Hello everyone,

I would like to get some advice regarding the optimal Veeam architecture for a two-site environment.

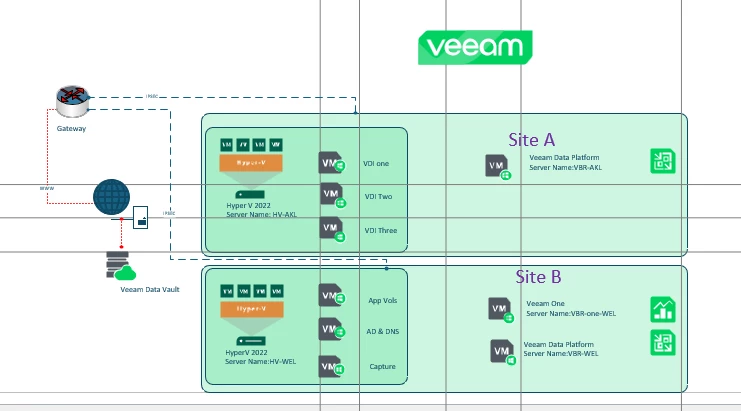

Infrastructure

- Hyper-V environment
- Two locations:
  - Vienna
  - Novi Sad
- Sites connected with 1 Gbps IPSec tunnel
- Repository available in both locations

Current components:

- Backup Server
- Hyper-V hosts acting as on-host proxies
- Repository Vienna
- Repository Novi Sad

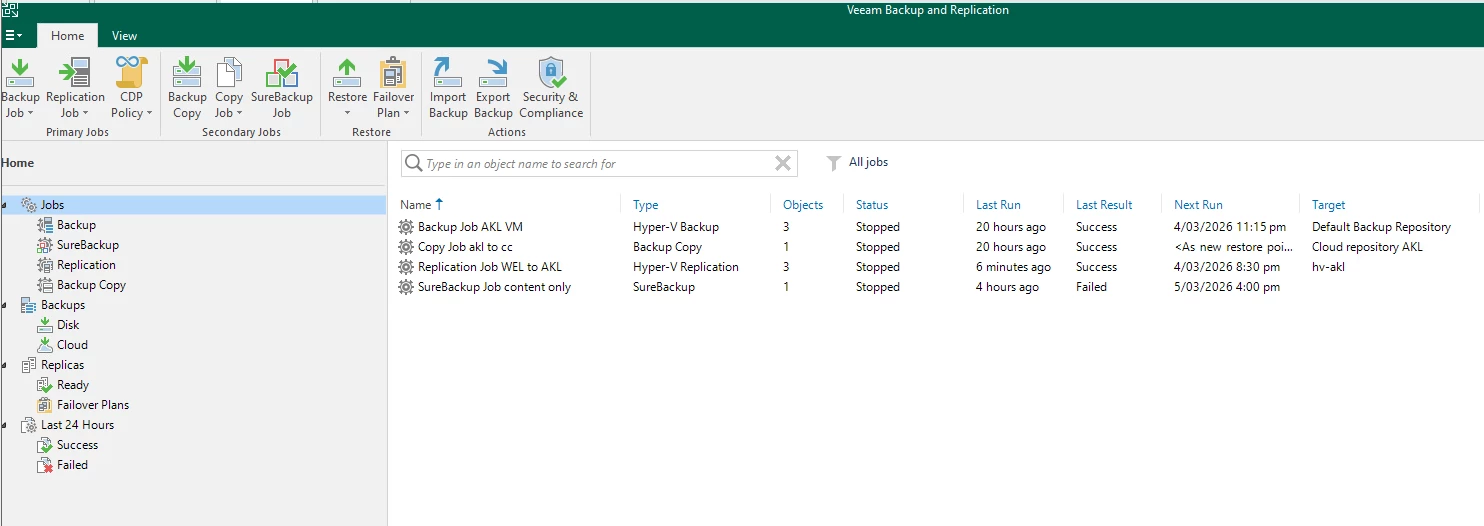

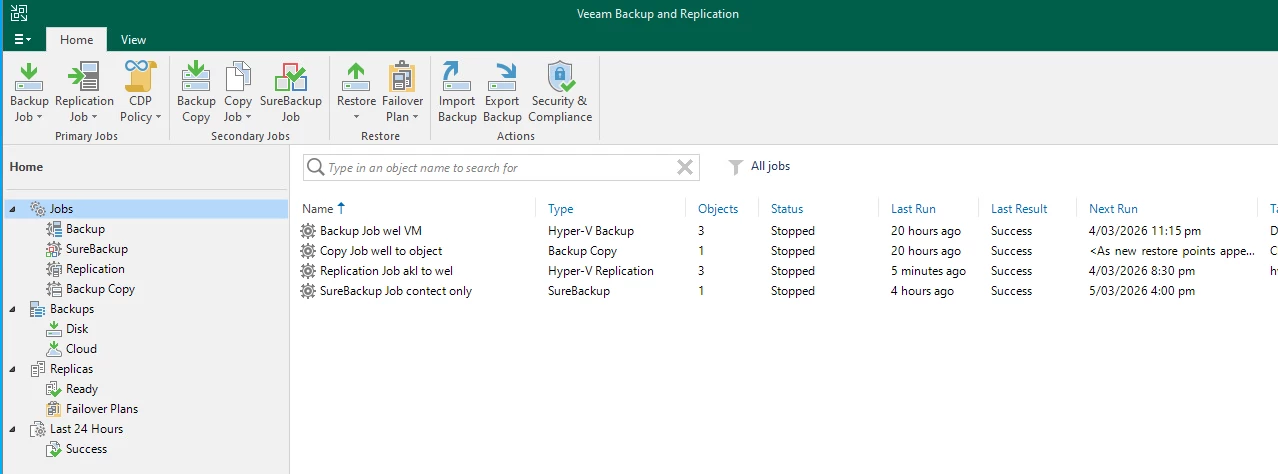

Current design

Right now the backup jobs run like this:

VMs from both locations → backup to Vienna repository

Then we run Backup Copy jobs from:

Vienna Repository → Novi Sad Repository

This means that VMs located in Novi Sad follow this path:

Novi Sad VM → Vienna Repo → Backup Copy → Novi Sad Repo

So effectively the data crosses the WAN twice.

Example

VM located in Novi Sad:

- Backup goes NS → Vienna
- Backup Copy goes Vienna → NS

Observation

During the first full backup we see relatively low throughput (~7 MB/s), and Veeam reports the network as the bottleneck.

This is expected since the traffic goes through an IPSec tunnel.
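To put the observed figure in context, here is a rough back-of-the-envelope sketch in Python. It only converts the ~7 MB/s reading into link utilization and estimates how long a full backup would take at that rate. The 1000 GB data size is a made-up example value, not a number from our environment, and the sketch assumes decimal units (1 MB = 10^6 bytes) and ignores compression, deduplication and protocol overhead.

```python
# Rough arithmetic only; all helper names here are illustrative, not Veeam APIs.

def mb_per_s_to_mbit_per_s(mb_per_s: float) -> float:
    """Convert megabytes per second to megabits per second (8 bits per byte)."""
    return mb_per_s * 8


def link_utilization(mb_per_s: float, link_mbit_per_s: float) -> float:
    """Fraction of the link capacity used at the given throughput."""
    return mb_per_s_to_mbit_per_s(mb_per_s) / link_mbit_per_s


def transfer_hours(size_gb: float, mb_per_s: float) -> float:
    """Hours needed to move size_gb at a constant rate (1 GB = 1000 MB)."""
    return size_gb * 1000 / mb_per_s / 3600


OBSERVED_MB_S = 7.0      # throughput reported during the first full backup
LINK_MBIT_S = 1000.0     # nominal 1 Gbps tunnel
EXAMPLE_SIZE_GB = 1000   # hypothetical amount of data, for illustration only

print(f"Observed rate: {mb_per_s_to_mbit_per_s(OBSERVED_MB_S):.0f} Mbit/s")
print(f"Link utilization: {link_utilization(OBSERVED_MB_S, LINK_MBIT_S):.1%}")
print(f"Time for {EXAMPLE_SIZE_GB} GB: "
      f"{transfer_hours(EXAMPLE_SIZE_GB, OBSERVED_MB_S):.1f} h")
```

So 7 MB/s is roughly 56 Mbit/s, which is only a small part of the nominal tunnel capacity.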

Question

Would it be a better design to do the following:

- Backup Vienna VMs → Vienna repository
- Backup Novi Sad VMs → Novi Sad repository
- Use Backup Copy jobs between the repositories for DR
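To make the comparison concrete, here is a small Python sketch that counts how much data would cross the WAN under each design. It is a simplified model, not a Veeam calculation: the VM names and sizes are hypothetical, it treats every backup as a full copy of the VM, and it assumes that in the proposed design each site's backups are copied to the other site.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class VM:
    name: str
    site: str        # "Vienna" or "Novi Sad"
    size_gb: float   # hypothetical size, for illustration only


def other_site(site: str) -> str:
    return "Novi Sad" if site == "Vienna" else "Vienna"


def wan_traffic_gb(
    vms: Iterable[VM],
    primary_repo_site: Callable[[VM], str],
    copy_repo_site: Callable[[VM], str],
) -> float:
    """Sum the data that crosses the WAN for the backup and the backup copy."""
    total = 0.0
    for vm in vms:
        primary = primary_repo_site(vm)
        copy = copy_repo_site(vm)
        if vm.site != primary:   # backup job leaves the VM's site
            total += vm.size_gb
        if primary != copy:      # backup copy job goes to the other site
            total += vm.size_gb
    return total


# Hypothetical example VMs
vms = [VM("vie-vm", "Vienna", 500), VM("ns-vm", "Novi Sad", 300)]

# Current design: everything backs up to Vienna, then copies to Novi Sad
current = wan_traffic_gb(vms, lambda vm: "Vienna", lambda vm: "Novi Sad")

# Proposed design: back up locally, copy to the other site
proposed = wan_traffic_gb(vms, lambda vm: vm.site, lambda vm: other_site(vm.site))

print(f"Current design:  {current:.0f} GB over the WAN")
print(f"Proposed design: {proposed:.0f} GB over the WAN")
```

In this model the Novi Sad VM crosses the WAN twice in the current design and once in the proposed one, while the Vienna VM crosses once in both.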