Since we added Linux Repositories to our Veeam Backup environment in my post from last week, why not add some Linux Proxies as well? I will show you how in this post. And trust me…this one will be far less painful. But adding Repositories wasn't so bad, was it? 😏

System Requirements

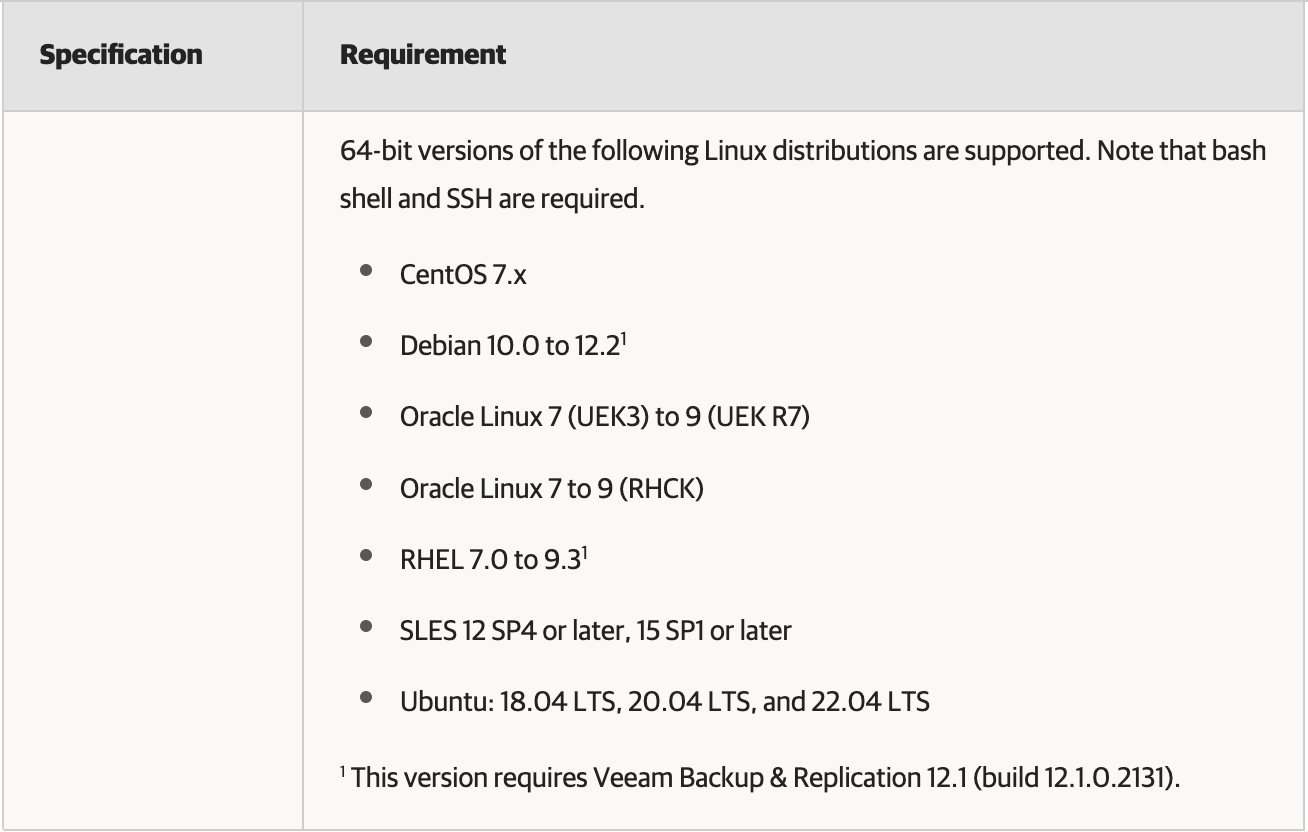

The first thing you need to do is check which OSes, hardware, and software are supported. Supported Linux OSes are exactly the same as with Repositories and are listed below:

As far as hardware goes, your server should have a minimum 2-core CPU, plus 1 core per 2 concurrent tasks (take note, this is a performance enhancement since v12, up from 1 core per 1 concurrent task); and a minimum of 2GB RAM, plus 500MB per concurrent task. But for best performance and to run multiple Jobs and tasks, you should have many more Cores and much more RAM than the minimum. As with the Veeam Repository, look to the Veeam Best Practice Guide for actual Proxy sizing guidelines.
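Here is a minimal sketch of that minimum-sizing arithmetic. The function names are made up for illustration, and two details are assumptions rather than anything Veeam states: an odd task count is rounded up to the next whole core, and 2GB is counted as 2048MB.

```shell
#!/bin/sh
# Minimum proxy sizing based on the rule described above:
#   cores  = 2 + 1 core per 2 concurrent tasks (rounded up: an assumption)
#   ram_mb = 2048 + 500 per concurrent task    (2GB taken as 2048MB: an assumption)

proxy_min_cores() {
  tasks=$1
  echo $(( 2 + (tasks + 1) / 2 ))
}

proxy_min_ram_mb() {
  tasks=$1
  echo $(( 2048 + 500 * tasks ))
}

# Example: a proxy expected to handle 8 concurrent tasks
echo "cores:  $(proxy_min_cores 8)"
echo "ram_mb: $(proxy_min_ram_mb 8)"
```

These are floor values only. For real deployments, the Best Practice Guide sizing should take priority over this calculation.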

The remaining requirements depend on the Backup Transport Mode you plan to use – Direct Storage (DirectSAN or DirectNFS), Virtual Appliance (hotadd), or Network (nbd).

- For Direct Storage mode, the Proxy should have direct access to the storage the source (production) VMs are on. The open-iscsi and multipathing packages also need to be installed (both are pre-installed by default on standard Ubuntu installs). If using DirectNFS, the NFS client package also needs to be installed: nfs-common (Debian) or nfs-utils (RHEL).

- If you choose Virtual Appliance mode, the Proxy VM(s) must have access to the source VM disks they process. The VM Proxies must also have VMware Tools installed (for vSphere Backups) and a SCSI 0:X Controller.

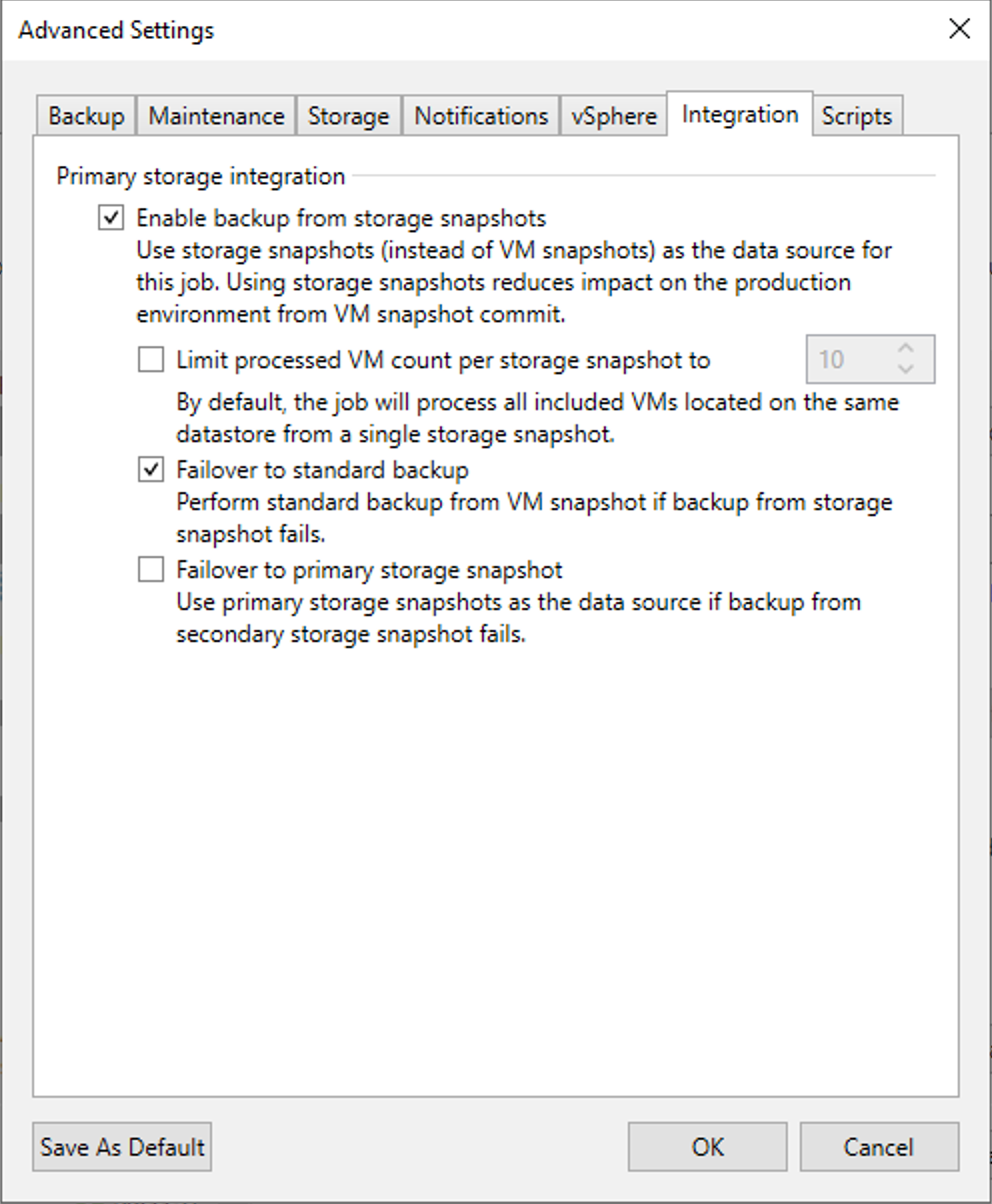

Note: Linux Proxies cannot be used as Guest Interaction Proxies, and VM Proxies do not support the VM Copy scenario.

- If using Backup from Storage Snapshots, the Proxy does not need access to the Volume, but rather its snapshot or clone. To use BfSS, the Proxy Transport mode should be configured for either Automatic or Direct Storage, and the Backup Job > Storage section > Advanced button > Integration tab should have the box for Backup From Storage Snapshots enabled.

Enable BfSS

You can reference additional requirements and limitations in the User Guide here and here.

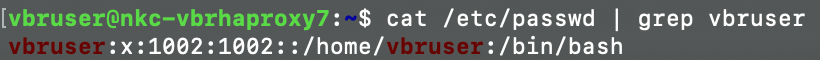

As with Linux-based Repositories, SSH and the Bash Shell are also required. You can check whether the user you use to configure your Linux server is using Bash by looking at that user's entry in the passwd file. The login shell is the final item in the output:

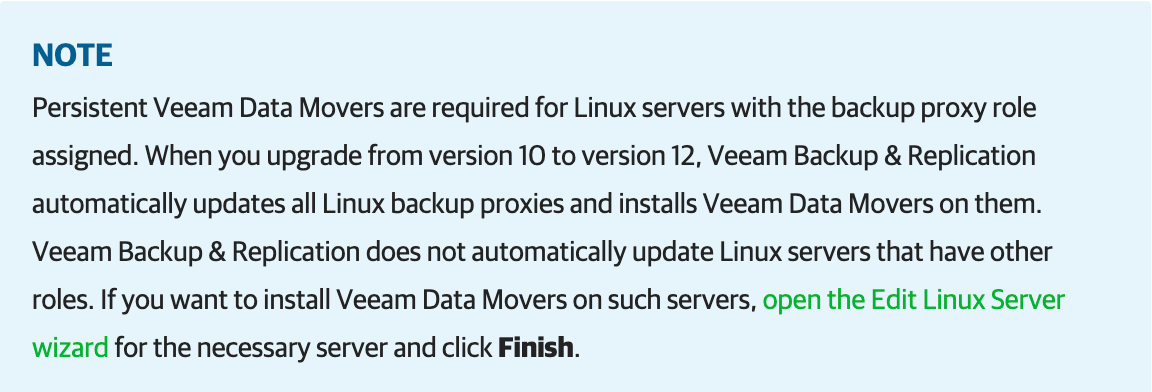
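If you prefer to script that check, here is a small sketch. The helper names are hypothetical, and it assumes a local account listed in /etc/passwd (directory-backed accounts may not appear there). The passwd path is a parameter only so the function can be tried against a sample file.

```shell
#!/bin/sh
# Print the login shell (7th colon-separated field) for a given user.
login_shell_of() {
  user=$1
  passwd_file=${2:-/etc/passwd}
  awk -F: -v u="$user" '$1 == u { print $7 }' "$passwd_file"
}

# Succeed only if the user's login shell path ends in "bash".
uses_bash() {
  case "$(login_shell_of "$1" "${2:-/etc/passwd}")" in
    */bash) return 0 ;;
    *)      return 1 ;;
  esac
}

# Example: check the account you are currently logged in with
me=$(id -un)
if uses_bash "$me"; then
  echo "$me: login shell is Bash"
else
  echo "$me: login shell is not Bash: $(login_shell_of "$me")"
fi
```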

Veeam installs two services used by Proxies – the Veeam Installer Service and the Veeam Data Mover component. Although the Veeam Data Mover component can be persistent or non-persistent, for Linux Backup Proxies the Data Mover must be Persistent.

Linux Installation

As I mentioned in my Repository post, I won't go through the Linux install. I gave a few suggestions regarding gaining install experience in that post.

Linux Configuration

I will again be configuring my Linux server to connect to my storage using the iSCSI protocol. If you use another protocol, make sure to use the commands for the protocol you're using, as well as for your Linux distribution (Debian, Red Hat, other).

After installing your OS, perform an update and upgrade to the software and packages:
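As a minimal sketch, assuming a Debian or Ubuntu server (RHEL-family systems would use dnf instead), the update and upgrade could look like this. The `run` helper is a hypothetical wrapper, not part of any package: it only prints each command unless you set DRY_RUN=0, so you can review what would be executed first.

```shell
#!/bin/sh
# DRY_RUN=1 (the default here) prints each command; DRY_RUN=0 executes it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Refresh the package index, then upgrade installed packages (Debian/Ubuntu).
update_system() {
  run sudo apt-get update
  run sudo apt-get -y upgrade
}

update_system
```

Run the script with `DRY_RUN=0` in the environment once you are happy with the printed commands.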

To save some reading time (yay! 😂), I won't provide the details of the remaining Linux configuration steps, as they are identical to what I provided in my Repository post. I'll just provide a high-level list here. For a reminder of each step's details, please refer to the Implementing Linux Repository post link above.

- Change your IQN to a more relevant name:

IQN example: iqn.2023-12.com.domain.hostname:initiator01

- If you're using a SAN, and your storage vendor has specific iscsi.conf and multipath.conf configurations, make those changes, then restart the iscsid and multipathd services to apply the change

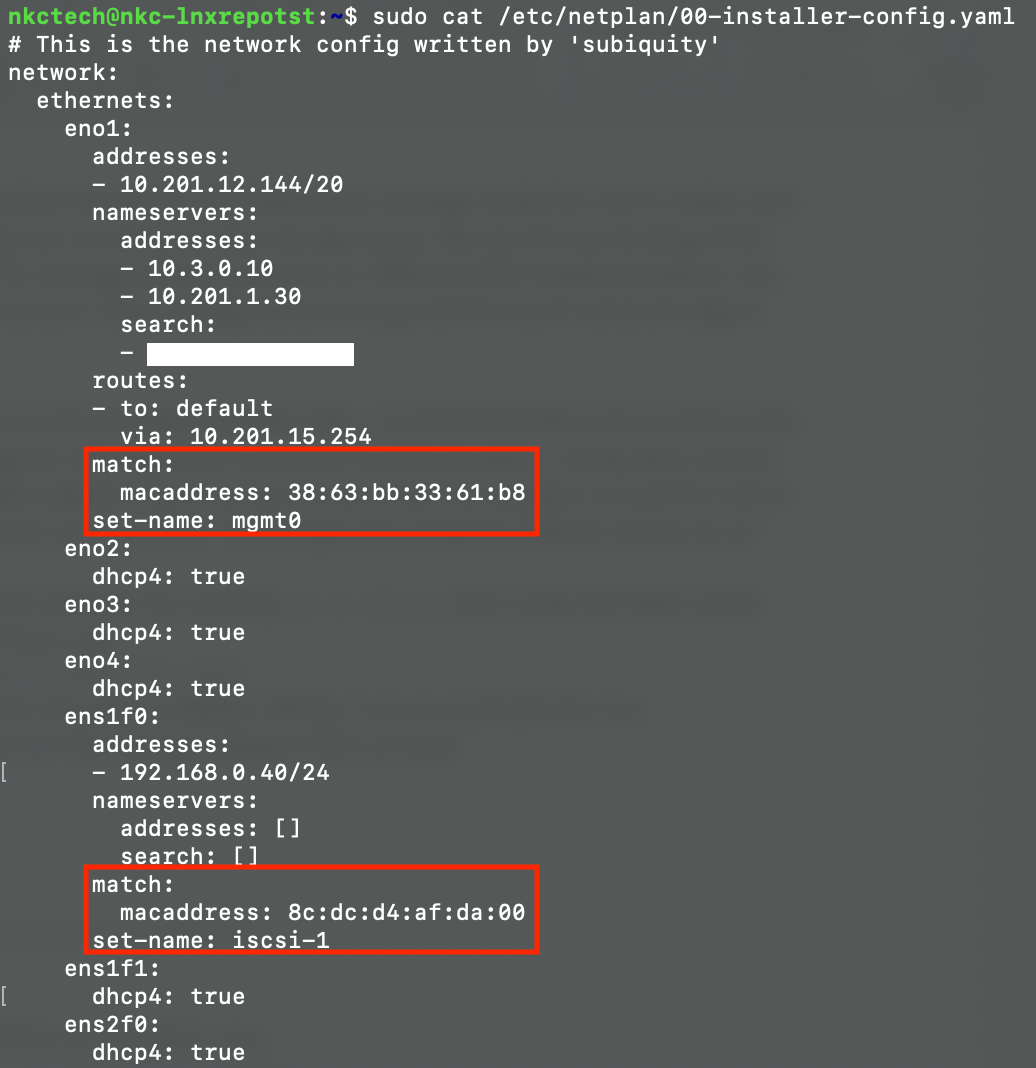

- Though not required, it is recommended to change your adapter names based on function:

Adapter Alias/Name Change

- After changing adapter names, configure iscsiadm iface for each storage adapter used for multipathing, then restart the iscsid service. You may need to restart your server for the changes to take effect

- If using Direct Storage or Backup From Storage Snapshots, log onto your production storage array and configure your production Volumes based on the Transport method you choose to use. I provide further details on each method below
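As a rough sketch of the IQN, iface, and service-restart steps in the list above, something like the following could be used. Several things here are assumptions for illustration: the adapter names `iscsi1` and `iscsi2`, the iface record names, the `run` dry-run helper, and the usual open-iscsi file location /etc/iscsi/initiatorname.iscsi. Adjust them to your own server and your storage vendor's guidance.

```shell
#!/bin/sh
# DRY_RUN=1 (the default here) prints each command; DRY_RUN=0 executes it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Print the line that belongs in the initiator name file.
# To apply it (assumed path):
#   initiator_line "<your-iqn>" | sudo tee /etc/iscsi/initiatorname.iscsi
initiator_line() {
  printf 'InitiatorName=%s\n' "$1"
}

# Create an iSCSI iface record and bind it to one storage adapter.
bind_iscsi_iface() {
  iface=$1
  nic=$2
  run sudo iscsiadm -m iface -I "$iface" --op=new
  run sudo iscsiadm -m iface -I "$iface" --op=update -n iface.net_ifacename -v "$nic"
}

# Restart the services so the new settings are picked up.
restart_iscsi_services() {
  run sudo systemctl restart iscsid multipathd
}

# Example using the IQN from this post and two hypothetical adapter names
initiator_line "iqn.2023-12.com.domain.hostname:initiator01"
bind_iscsi_iface iface-iscsi1 iscsi1
bind_iscsi_iface iface-iscsi2 iscsi2
restart_iscsi_services
```

With the default dry-run setting, the script only prints the iscsiadm and systemctl commands, which makes it easy to compare them against the detailed steps in the Repository post before running anything.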

After everything is configured, you're now ready to finalize your Proxy. I'll finish this configuration section based on the Backup Transport method you're implementing…

Virtual Appliance

This is the easiest method to implement. Aside from making sure your Proxy VMs have access to the source disks of the production VMs you're backing up, all you need on your Linux system is SSH enabled and a Linux user account, used for the connection from your Veeam server, that uses the Bash shell. Configuration steps 1-4 above are not needed. Also, if you use a mix of physical and virtual Proxies in your environment, as I do, here is a reference point: I generally configure my VM Proxies with 8 Cores and 16GB RAM. If you use all virtual Proxies, then make sure to size all your Proxy VMs according to Veeam Sizing Best Practices. I provided the BP link above.

Backup From Storage Snapshot (BfSS)

If you're using BfSS, log onto your storage array and configure access to your production Volumes with the IQN name you set earlier in the initiatorname.iscsi file. As mentioned in the Requirements section, for BfSS, the only access the Proxy server requires is access to snapshots or clones, not the Volumes. For my vendor array, the setting I use for this is to configure "Snapshot only" access to the production Volumes.

The last thing needed is to connect the Proxy server to the storage array (target):

sudo iscsiadm -m discovery -t sendtargets -p Discovery-IP-of-Array

Direct Storage (DirectSAN)

This Transport method, I just recently learned, is a bit "clunky" with Linux. While researching the User Guide, and filling in the gaps the Guide leaves by reading other posts on the Web, I came across this Forums post, which states at least one caveat against using this method with Linux. Since v11a, and as noted in the v11a Release Notes: "Linux-based Backup Proxies configured with multipath does not work in DirectSAN". There are a couple of comments in the post from users who did occasionally have multipathing work. But, with it being so unstable, it's probably best to avoid this method until Veeam resolves the multipathing issue.

For article completeness, and for when Veeam does resolve the multipathing issue, I will still share the configurations needed for DirectSAN. On your production storage, each Volume needs ACL access with the Linux server's IQN, as is done with BfSS. But, for each LUN, "Volume only" access needs to be configured instead. No Snapshot access is required.

On your Linux server, perform a target discovery command, as is done with BfSS. Afterward, you need to do a target "login" operation:

sudo iscsiadm -m node -l

Veeam Server

After you've finished configuring your Linux server for the Transport method you are using, you then need to add your Linux server as a managed server in Veeam, then go through the Add Proxy > VMware Backup Proxy process. Once you perform those 2 steps, you can then either manually assign this specific Proxy to your Jobs as needed, or allow Veeam to choose for you via the Automatic setting.

Conclusion

And that's all there is to implementing Linux Proxies into your Veeam Backup environment. Told you it wasn't so bad 😉 You now have a fully Linux-integrated Veeam Backup & Replication environment! Well, at least fully-integrated Linux Repositories and Proxies. 🙂