Introduction

In a previous post, I covered the SOBR Capacity Tier calculations and considerations. Today, I will explore the Archive Tier and discuss how to estimate Archive storage requirements, expected API calls, and Proxy Appliance uptime.

Before we jump into more details, remember that the Archive tier only works with “Amazon S3 Glacier”, “Amazon S3 Glacier Deep Archive” and “Microsoft Azure Archive Storage” in Veeam v11.

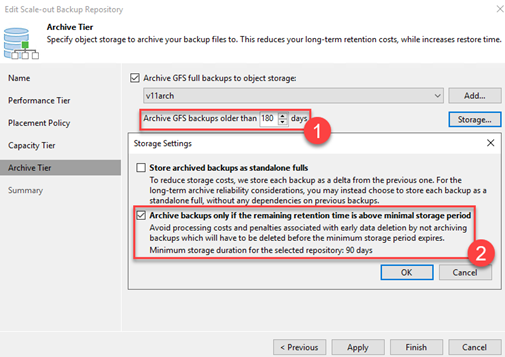

- In the SOBR configuration, under Archive Tier, you define after how many days (1) backups are offloaded from the more expensive Capacity Tier to the typically** less expensive Archive Tier.

**Keep in mind that the Archive tier is meant to store data for a long time (i.e. more than 1 year, up to 7 or more years). It is not meant for active day-to-day restore operations.

You will find that storing data in the Archive Tier for less than 1 year won’t make much economic sense (at least at the time of this write-up!).

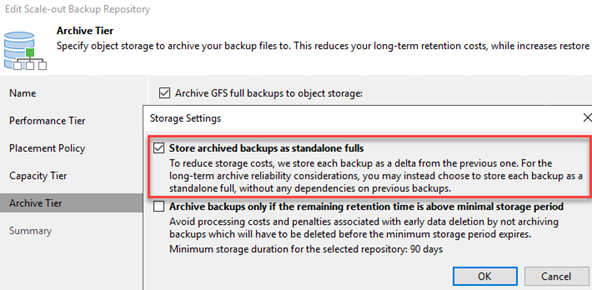

- It is also very important to remember that exiting an Archive Tier “too early” is akin to breaking your lease: You are going to lose that rent deposit! To avoid the “early deletion fees”**, I enable what I call the “Veeam Archive Tier Safety Net” (2).

**For reference see the row labeled “Minimum storage duration charge” for AWS S3 storage classes and the section titled “Cool and Archive early deletion” for Azure Blob Storage.

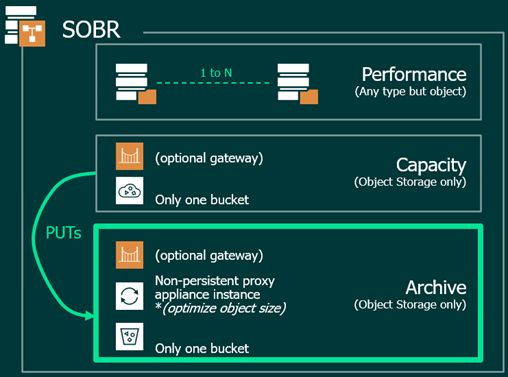

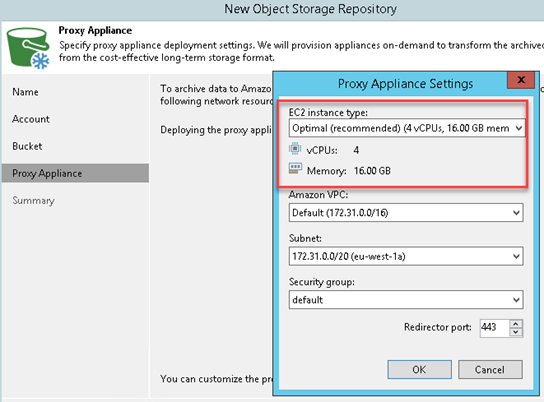

- When an Archiving Job runs, a non-persistent proxy appliance is spun up to optimize the object size before the data is stored in the Archive Tier. This reduces the number of API calls made to the Archive Tier as well as the number of objects stored, ultimately optimizing both performance and costs.

How to estimate Archive storage requirements?

Let’s consider the following example:

- 100TB source data

- 10% daily change rate

- 50% data reduction

- Retention 14d 5w 12m 7y

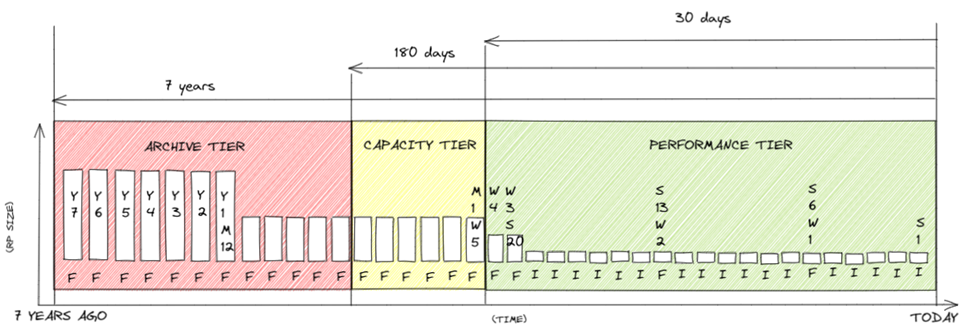

- Capacity Tier move after 30d

- Archive after 180d

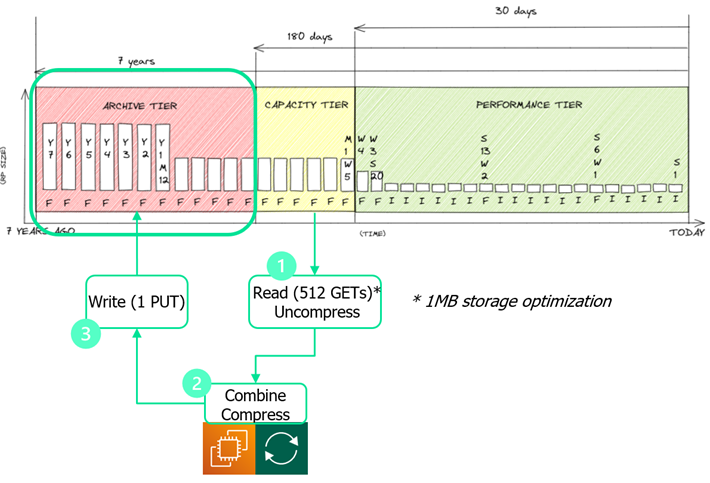

I have used https://rps.dewin.me/rpc because it allows me to visualize what goes in the Archive tier (i.e. anything older than 180 days up to 7 years – in our example).

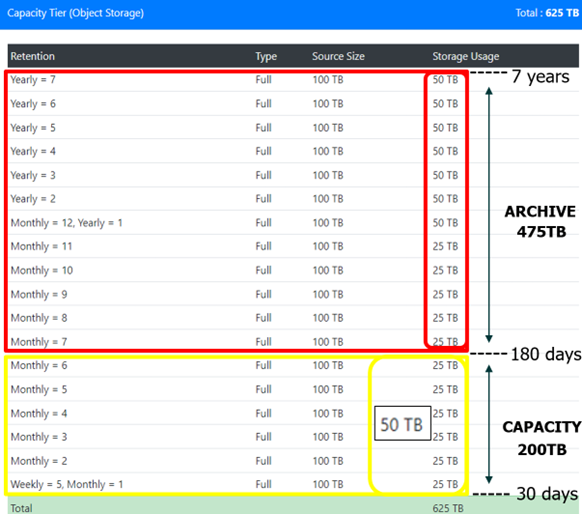

I leveraged the Capacity Tier output and simply selected and summed up the restore points that are older than 180 days to estimate my Archive Tier Storage requirements.

Note that if you’ve opted to store the Archive backups as standalone fulls, then you must count every restore point as a full (i.e. 50TB each in our example).
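The summing step described above can be sketched in a few lines of Python. This is only an illustration: the `RestorePoint` type, the `archive_tier_tb` helper and the sample restore points are hypothetical and are not taken from the calculator output. The 180-day threshold and the 50TB full size come from the example in this post.

```python
from dataclasses import dataclass

@dataclass
class RestorePoint:
    age_days: int    # age of the restore point in days
    size_tb: float   # size as stored in the Capacity Tier (full or incremental)

def archive_tier_tb(points, archive_after_days=180,
                    standalone_fulls=False, full_size_tb=50.0):
    """Estimate Archive Tier storage from a list of restore points."""
    # Only restore points older than the archive threshold are moved.
    eligible = [p for p in points if p.age_days > archive_after_days]
    if standalone_fulls:
        # Every archived restore point is stored as a full backup.
        return len(eligible) * full_size_tb
    return sum(p.size_tb for p in eligible)

# Hypothetical restore points, for illustration only.
points = [
    RestorePoint(age_days=20, size_tb=5.0),
    RestorePoint(age_days=210, size_tb=50.0),
    RestorePoint(age_days=240, size_tb=5.0),
    RestorePoint(age_days=365, size_tb=5.0),
]

print(archive_tier_tb(points))                         # 60.0
print(archive_tier_tb(points, standalone_fulls=True))  # 150.0
```

In practice you would fill the list with the restore points reported by the calculator for your own retention policy.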

How to estimate API calls?

The temporary proxy appliance needs to #1 read (GET) objects from the Capacity Tier and uncompress them, #2 combine and re-compress the data, and #3 write (PUT) the resulting objects to the Archive Tier.

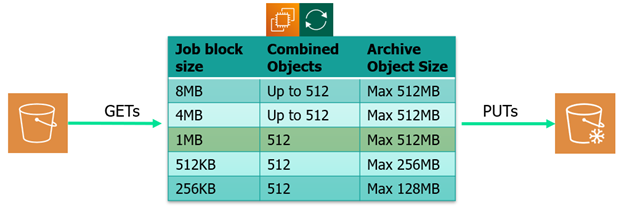

The job storage optimization setting (job block size) drives how many Capacity Tier objects are combined into each Archive Tier object (see table below). In the case of “public cloud”, the block size should always be 1MB.

When you estimate the number of API calls, you must consider the amount of uncompressed data that must move from the Capacity Tier into the Archive Tier.

You can use the formula below to get your Archive PUT count (if using 1MB block size):

“Archive Tier PUTs” = “Capacity Tier PUTs” divided by “512”

Let’s use an example to illustrate the API calls count:

- You need to transfer 50TB worth of Capacity Tier objects into the Archive Tier

- 50TB uncompressed is 100TB (using Veeam’s typical 2:1 data reduction)

- Using a 1MB “job block size”, the proxy will need to read (GET) 104,857,600 objects from the Capacity Tier (100TB divided by 1MB).

- The proxy will write (PUT) 204,800 objects to the Archive Tier (104,857,600 divided by 512).
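The arithmetic in the example above can be reproduced with a short Python sketch. The function name `estimate_api_calls` is my own, and the sketch assumes binary units (1TB = 1024 × 1024 MB), a 1MB job block size and the divisor of 512 from the formula above, which only applies to that block size.

```python
MB_PER_TB = 1024 * 1024      # binary units, matching the figures in this post
BLOCK_SIZE_MB = 1            # job block size assumed for public cloud
OBJECTS_PER_ARCHIVE_PUT = 512  # divisor from the formula above (1MB block size only)

def estimate_api_calls(capacity_tb, data_reduction_ratio=2.0):
    """Return (GETs from the Capacity Tier, PUTs to the Archive Tier)."""
    # API calls are driven by the uncompressed amount of data.
    uncompressed_mb = capacity_tb * data_reduction_ratio * MB_PER_TB
    gets = int(uncompressed_mb // BLOCK_SIZE_MB)
    puts = gets // OBJECTS_PER_ARCHIVE_PUT
    return gets, puts

gets, puts = estimate_api_calls(50)
print(f"{gets:,} GETs, {puts:,} PUTs")  # 104,857,600 GETs, 204,800 PUTs
```

Plug these counts into your cloud provider's pricing calculator to get the request charges.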

What is the Proxy Appliance’s uptime?

The observed average transfer rate of an Amazon EC2 proxy appliance is about 100 MB/s, while the Azure proxy appliance is slightly slower at around 80 MB/s.

Of course, these transfer rates may vary depending on the proxy appliance size you have selected, but the above numbers should suffice to get a decent uptime estimate.

Let’s use the example of transferring 50TB worth of objects from an AWS S3 Capacity Tier into the Archive Tier:

50TB divided by 100MB/s ≈ 145 hours (a little more than 6 days)
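Here is the same uptime calculation as a small Python sketch, so you can swap in your own numbers. The helper name `proxy_uptime_hours` is hypothetical, binary units are assumed (1TB = 1024 × 1024 MB), and the 100 MB/s and 80 MB/s rates are the observed averages mentioned above rather than guaranteed figures.

```python
def proxy_uptime_hours(transfer_tb, rate_mb_per_s):
    """Estimate how long the proxy appliance runs for a given transfer."""
    seconds = transfer_tb * 1024 * 1024 / rate_mb_per_s
    return seconds / 3600

aws_hours = proxy_uptime_hours(50, 100)   # Amazon EC2 proxy appliance
azure_hours = proxy_uptime_hours(50, 80)  # Azure proxy appliance

print(f"AWS:   {aws_hours:.1f} hours")    # AWS:   145.6 hours
print(f"Azure: {azure_hours:.1f} hours")  # Azure: 182.0 hours
```

Multiply the hours by the hourly price of the proxy appliance size you selected to estimate the compute cost of the archiving session.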

Side Note: To avoid data transfer fees, use endpoints to keep the proxy appliance traffic internal (maybe we’ll cover this in a future post).

Summary

In this post, I showed you how to estimate Archive Storage requirements, get a sense of your API calls and evaluate your Proxy Appliance uptime.

Hopefully this post will help you estimate costs when leveraging public cloud cost calculators.