We have Kasten K10 installed via the operator on multiple clusters. All of the K10 instances seem to have an excessive number of Helm revisions when viewed with helm history k10.

Is this normal behavior? We seem to be getting ~200 revisions per hour.
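For anyone wanting to put a number on the rate, here is a minimal sketch in Python that estimates revisions per hour from the JSON form of the Helm history. It assumes the entries come from something like helm history k10 -n kasten-io -o json, that each entry carries an RFC 3339 "updated" timestamp, and that kasten-io is the namespace; the function names are made up for illustration.

```python
import re
from datetime import datetime


def parse_timestamp(value):
    """Parse an RFC 3339 timestamp, normalising the fractional seconds."""
    value = value.strip()
    if value.endswith("Z"):
        value = value[:-1] + "+00:00"
    # Pad or truncate the fraction to exactly 6 digits so that
    # datetime.fromisoformat accepts it on older Python versions too.
    value = re.sub(r"\.(\d+)", lambda m: "." + m.group(1)[:6].ljust(6, "0"), value)
    return datetime.fromisoformat(value)


def revisions_per_hour(history):
    """Estimate the revision rate from a list of history entries.

    Each entry is assumed to be a dict with an "updated" timestamp string.
    Returns 0.0 when there are too few entries to measure an interval.
    """
    times = sorted(parse_timestamp(entry["updated"]) for entry in history)
    if len(times) < 2:
        return 0.0
    span_hours = (times[-1] - times[0]).total_seconds() / 3600
    if span_hours == 0:
        return 0.0
    # N entries span N - 1 intervals.
    return (len(times) - 1) / span_hours
```

To use it, save the JSON output to a file, load it with json.load, and pass the resulting list to revisions_per_hour. Note that Helm only keeps a limited number of revisions in its history, so the result reflects the retained window rather than the full lifetime of the release.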

Kasten K10 Operator install in Openshift has excessive amount of helm revisions on K10 instance?

Hello

I would say this is not normal. Would it be possible to show an example of what you are seeing?

Thanks

Emmanuel

Hi

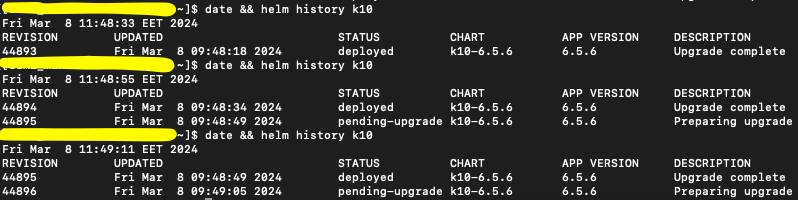

In helm history it looks like this:

I have not been able to get any further info on why it does an upgrade.

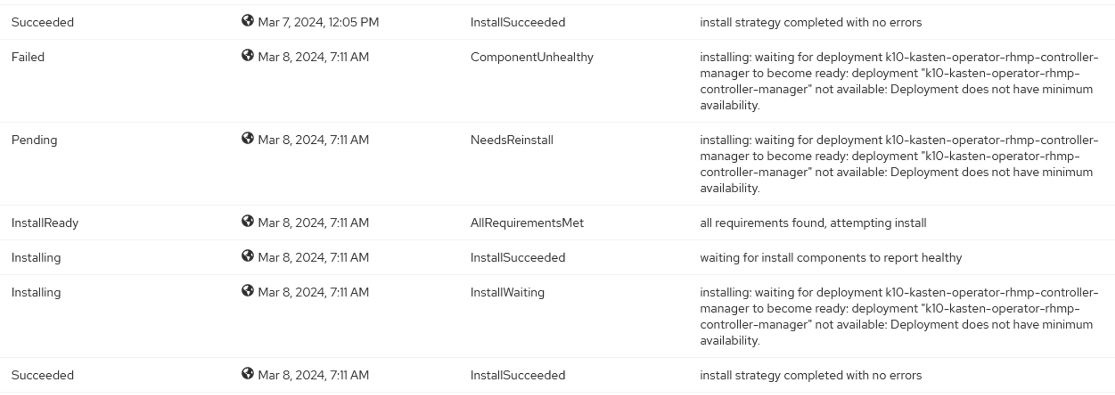

On the operator side of the OpenShift GUI I get these kinds of messages repeatedly, but nowhere near as frequently as the helm revision goes up:

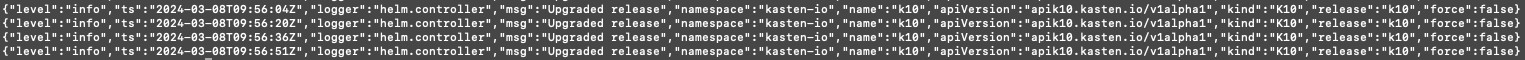

Looking at the logs of k10-kasten-operator-rhmp-controller-manager, there are a lot of these “Upgraded release” messages:

So most likely the controller manager is behind all this, I just have no idea why.

BR

Mikko

Hello

Do you see K10 pods restarting over and over?

Thanks

Emmanuel

That’s the weird thing, they don’t restart.

BR

Mikko

Hello

Are you using Openshift Operator?

Thanks

Emmanuel

Hi

Yes:

We have a newer version installed as well with the free license just to test this issue, and that is version 6.5.5 if I remember correctly. The issue appears there as well.

BR

Mikko

EDIT: It was 6.5.6 as seen in the previous screen captures.

Hello

So, you shouldn't list the Helm history, as the two don't have much to do with each other. I would recommend that you do not have a Helm-installed K10 alongside an Operator-installed K10. Lastly, I would take a look at the k10-kasten-operator-rhmp-controller-manager logs. Helm and the OpenShift Operator are two different installation methods.

Thanks

Emmanuel

Hi

I think that Kasten K10 operator always deploys the K10 instance with helm. I only have k10-kasten-operator-rhmp.kasten-io installed and when you deploy a K10 instance from there (through gui or cli) it always uses helm to deploy the K10 custom resource.

BR

Mikko

Hello

That is correct, both are Helm-based. But if you have installed the K10 operator (OpenShift), please deploy the K10 instance from the operator in the OCP console, not from the CLI (helm); mixing the two can cause unexpected behaviour, which is probably what you are facing.

If you have done that, I would recommend uninstalling K10 and reinstalling it using only one of the two methods, either the Operator or Helm.

Hope it helps

FRubens

Hi

Ah, sorry for the confusion! By CLI I meant via manifests. All in all what I have done is install K10 from the operator, configured all the settings and policies to my liking and then converted this to an ArgoCD app which uses the same kind of manifests that are deployed from the operator and K10 itself.

But the issue is still present even if I just install the operator on a fresh cluster and deploy K10 from the operator via Openshift GUI without doing any of the ArgoCD stuff.

BR

Mikko

Hi All,

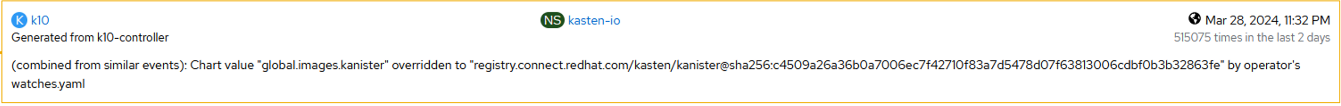

We are also getting a whole lot of these events on the k10 instance:

I could not find this “watches.yaml” of the operator. Anyone got an idea how to deal with these?

BR

Mikko

Hello

Alright, first of all, we do not directly support ArgoCD approaches. Based on the error, though, it looks like the K10 controller is hitting a conflict when attempting to create a kanister container. The conflict appears to be over where the image is pulled from, and the operator is correcting this in the process.

Thanks

Emmanuel
