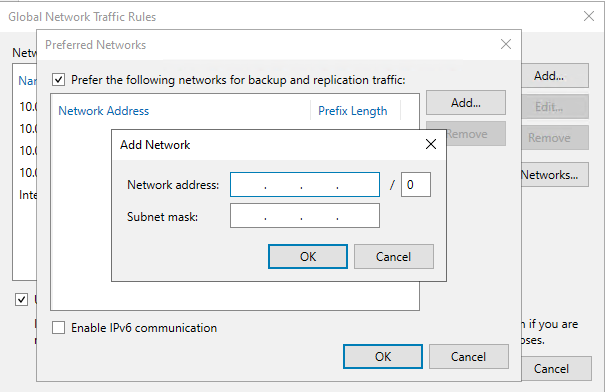

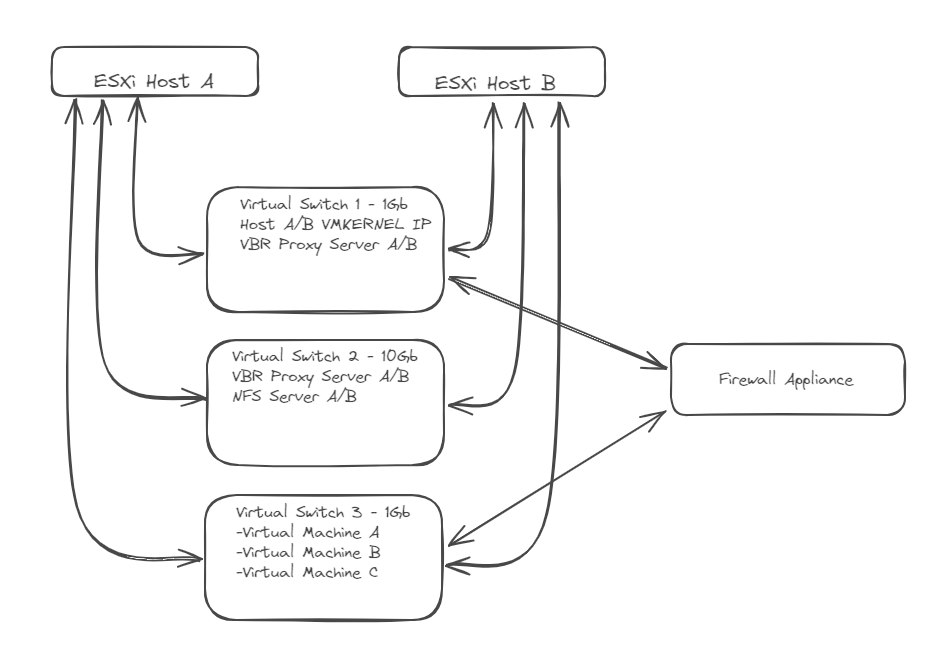

I have a two-node ESXi 7.0.3 setup and am running Veeam v12 to back up 5 VMs to an NFS share (all production VMs live on node A; the NFS share is a BSD VM on node B). The idea is that all production VMs can currently run on a single host, and a backup copy of them can be kept in a VM on host B. Each server has two 10Gb network interfaces. On both hosts I have attached both NICs to a virtual switch and port group, and created a VMkernel interface that references the correct port group. After reconfiguring VMware, I rescanned both ESXi hosts in Veeam and started the backup job. I am not convinced that the job is using the new 10Gb link between the two hosts for transport. What am I missing?
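For context on why I am unsure, here is the rough arithmetic I am using to sanity-check the job's reported processing rate. It is only a sketch: the function names are my own, the ceilings ignore protocol overhead, and it assumes the alternative path would be a 1Gb link, which is an assumption rather than something confirmed about this setup.

```python
def link_ceiling_mb_per_s(link_gbps: float) -> float:
    """Theoretical ceiling of a link in MB/s (decimal units), ignoring overhead."""
    # 1 Gb/s = 1000 Mb/s, and 8 bits per byte.
    return link_gbps * 1000 / 8


def rules_out_link(observed_mb_per_s: float, link_gbps: float) -> bool:
    """True if the observed rate is higher than the link could ever carry."""
    return observed_mb_per_s > link_ceiling_mb_per_s(link_gbps)


if __name__ == "__main__":
    # Hypothetical observed rate, not a measurement from my environment.
    observed = 300.0
    print(link_ceiling_mb_per_s(1))      # ceiling of an assumed 1Gb path
    print(link_ceiling_mb_per_s(10))     # ceiling of the 10Gb path
    print(rules_out_link(observed, 1))   # would this rate rule out a 1Gb path?
```

A rate above the 1Gb ceiling would rule that path out, but a rate below it proves nothing either way, since the bottleneck could just as easily be the source disks or the NFS target.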

Best answer by beenydu
