After watching last week’s Community Recap, I realized that I missed a great blog posting from

The magic lies in some S3 IAM policy work that prevents buckets from being visible and accessible to the wrong tenants. When I was setting this up, there wasn’t much information on doing exactly that, and S3 policies were completely new to me. Wasabi’s and Veeam’s instructions, linked at the end of this post, didn’t work for me out of the box, so after asking around in the Veeam Community, the R&D Forums and the Veeam subreddit, and after some experimentation, I was led to the answers I needed. I hope that posting this will help alleviate that pain for someone else in the future.

Also, I realize that this post gets a bit salesy for Wasabi in parts, so I wanted to add an early disclaimer: I have no relation to Wasabi other than being a pretty happy partner overall, and my clients are pretty happy as well!

Setting up Wasabi - Using WACM

It should be noted up front that Wasabi has a utility for partners. The Wasabi Account Control Manager (or WACM) allows you to create multiple sub-accounts for your tenants, under which you can create buckets as needed. The advantage is that sub-accounts should be restricted from accessing each other and their respective buckets, which should also make billing easier, and I believe you can set a limit on how much space each sub-account can use. Your tenants may also be able to log into their console with their sub-accounts to view their data. The disadvantage of WACM is that it costs extra, but not a lot. When I last spoke to our account manager about this, it was a $100 per month fee to have access to the WACM. Those costs are probably baked into your managed services or backup-as-a-service agreement, but if they’re not, that’s going to be a small monthly expense to you. Wasabi’s recommendation was to set up a single account with multiple buckets, which I’ll outline below. I don’t have any experience with the WACM, so I can’t give much advice on using it, but from what I’ve seen, it seems like it would really be the way to go if you know that it’s going to work out for you!

Setting up Wasabi - The Other Way (no WACM)

If you’re just starting out in object storage, or are a smaller MSP, it may take some extra margin to offset the cost of the WACM noted above. As such, when starting out, you may need to do things in a more cost-effective manner instead. Wasabi’s recommendation to me was to set up an account using their standard Cloud Storage Console and then create multiple buckets, user accounts and S3 policies, one for each tenant, to prevent the tenants from accessing each other’s buckets. With that said, because the bucket names are related to the client names in my case, I didn’t want the buckets to even be visible to the other tenants, so my policy is set up to be pretty much as restrictive as possible.

WITH THAT SAID, when I last spoke to our account manager/support, there was no migration path from a single account with multiple buckets to the WACM console, so think long and hard about how you want to host this data. If you expect fast growth and many tenants, it may be worth taking the WACM hit early on rather than being stuck without it. That particular issue may be somewhat mitigated by VBR v12, once released, thanks to its new VeeaMover utility, which will allow you to move data between buckets, and which I suspect wouldn’t have much issue migrating data from an individual account bucket to a sub-account bucket in the WACM. Of course, when you’re using immutability, data still within its immutability period will remain flagged as immutable and cannot be deleted until that time has passed, so there may be some duplication of data between the buckets and some extra charges when migrating, but again, your mileage may vary.

ALSO OF NOTE: As of the time of writing, this method only works in the later builds of Veeam 11a if you don’t want VBR to be able to see all of the buckets in your account. Older builds of Veeam 11 and 10 cannot add a bucket if the S3 policy prevents all buckets from being listed (s3:ListAllMyBuckets), because the wizard errors out. Veeam updated VBR’s code in later builds so that, when it can’t list all buckets, it lets you supply a bucket name instead of just failing. Plus, I’m still not sure why you wouldn’t want to be running 11a anyway, right?

FINAL NOTE: You cannot set limits per bucket in Wasabi. Meaning, if your client is prepaying for a specified amount of space and you aren’t billing every month based on usage, you can’t actually enforce a limit on the bucket. You can set a soft limit on the repository in VBR, but the customer could theoretically change that limit, so you’ll want to keep an eye on bucket utilization... something you should probably be doing anyway.

Creating your Wasabi Account

If you don’t already have a Wasabi account set up, you can sign up for a free 30-day trial. Note that the trial has a 1TB limitation, but that limitation can be removed by placing your credit card information on file. Also note that this will set you up for “pay-as-you-go” (PAYG) pricing. There are price breaks if you sign up for their “Reserved Capacity Storage” (RCS) plans, which in my case break even and start saving money at around 40-50TB of utilization. With RCS plans, you can also change your billing to be invoiced rather than automatically charged to the credit card on file every month. Also note that with PAYG plans, there is a minimum of 90 days of data retention needed to prevent charges for deleting your data too early, whereas the RCS plans drop that to 30 days. I also heard rumblings through the grapevine that when you are storing Veeam data, support can reduce that 90-day requirement to 30 days while still on a PAYG plan, and I had no difficulty getting them to make that billing change for me. It should also be noted that this minimum is an account-wide setting and not a per-bucket setting, so if you have clients that want to keep data for only up to 30 days before deleting, you’ll want to have it reduced. Many clients will want to keep much more data in their buckets, though, especially considering that object storage is relatively inexpensive. After creating your account, make sure that you also set up MFA, of course, since we’re talking about multiple clients’ data here. Don’t become a statistic.
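To make the minimum-retention point a bit more concrete, here is a minimal sketch of the arithmetic. It only counts how many days of the minimum are still unmet when data is deleted. The function name is hypothetical, and how Wasabi actually turns those days into a charge is not covered here, so treat this as an illustration and not as a billing calculator.

```python
def early_deletion_days(days_stored: int, minimum_days: int = 90) -> int:
    """Return how many days of the minimum retention period are still
    unmet when data is deleted after `days_stored` days.

    minimum_days defaults to 90 (the PAYG minimum mentioned above);
    pass 30 for an RCS plan or a reduced PAYG minimum.
    """
    if days_stored < 0 or minimum_days < 0:
        raise ValueError("day counts must be non-negative")
    return max(0, minimum_days - days_stored)


# A tenant that only keeps 30 days of backups:
print(early_deletion_days(30))      # 90-day minimum
print(early_deletion_days(30, 30))  # 30-day minimum
```

With a 30-day retention policy, the difference between the two minimums is the reason it can be worth asking support about the reduction.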

Creating your Buckets

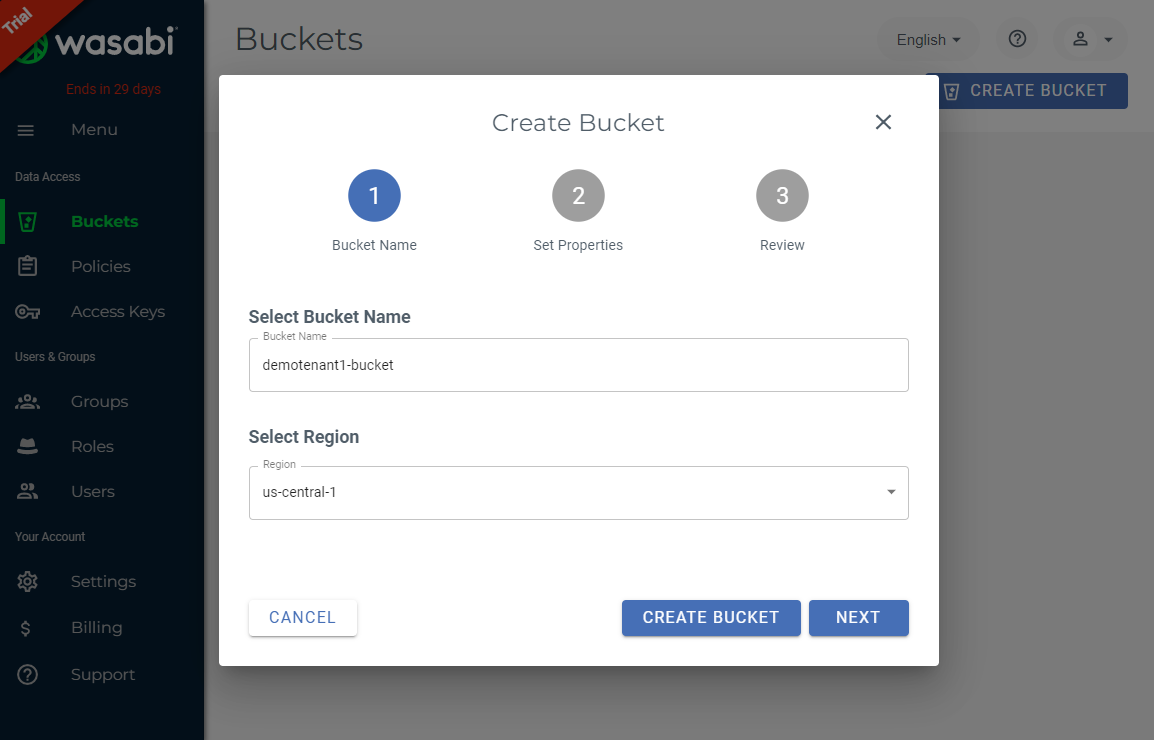

Now it’s time for the fun to begin. When you first log in, you’re prompted to create your first bucket. In this case, I’m going to create two buckets, demotenant1-bucket and demotenant2-bucket. Note that bucket names must be unique globally, as in, within all of Wasabi, not just your account. You’ll also want to select your datacenter region. In my case, I’m in Nebraska, and so are most of my clients, so I’m going to select the us-central-1 region which is in Plano, Texas. You’ll typically want to select the region closest to your client for best performance, but not too close. I have one client that has a second datacenter near Portland, Oregon, so while the us-west-1 region is closest, it is uncomfortably close since the data is being stored for disaster recovery and we ended up sending their data to Plano as well just to be geographically dispersed.
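If you end up creating a lot of tenant buckets, it can help to generate the names consistently. Below is a small sketch, assuming the commonly documented S3-style naming rules (3 to 63 characters, lowercase letters, digits and hyphens, starting and ending with a letter or digit). The helper name and the "-bucket" suffix convention are my own illustration, and it can't check global uniqueness, which only Wasabi can tell you when you create the bucket.

```python
import re

# Assumed S3-style naming rules: 3-63 characters, lowercase letters,
# digits and hyphens, starting and ending with a letter or digit.
# Dots are deliberately rejected here to keep names simple.
_BUCKET_NAME = re.compile(r"^[a-z0-9][a-z0-9-]{1,61}[a-z0-9]$")


def tenant_bucket_name(tenant: str, suffix: str = "bucket") -> str:
    """Build a '<tenant>-<suffix>' bucket name and validate its format."""
    # Lowercase the tenant name and collapse anything else into hyphens.
    slug = re.sub(r"[^a-z0-9]+", "-", tenant.lower()).strip("-")
    name = f"{slug}-{suffix}"
    if not _BUCKET_NAME.match(name):
        raise ValueError(f"invalid bucket name: {name!r}")
    return name


print(tenant_bucket_name("DemoTenant1"))
print(tenant_bucket_name("Demo Tenant 2"))
```

Keep in mind that names derived from client names reveal who your clients are to anyone who can see the bucket list, which is part of why the policy later in this post hides buckets from other tenants.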

Once you’ve created your bucket name and selected the region, click next. Note the exact bucket name as you’ll need this in the next steps when you create your IAM policy.

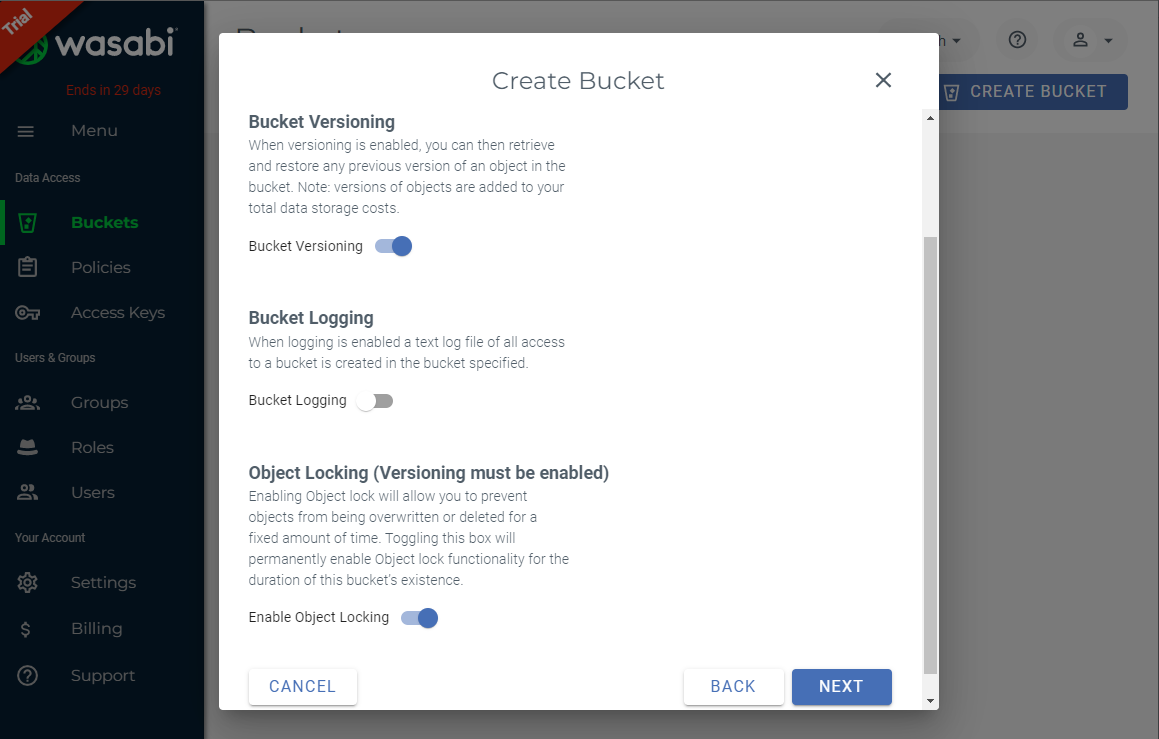

Since we’re creating an immutable repository, we need to enable bucket versioning and object locking, so slide those options on and click next.

Confirm your bucket settings and click “Create Bucket”. Repeat as needed until your buckets are created.

Creating User Access Separation Policies (Bucket Level)

This is where the magic happens and where I found the most difficulty. An IAM policy must be created for each user/bucket. In the next section, we’ll create a user account that will be assigned this policy granting them access to their associated bucket, and restricting access to any other buckets.

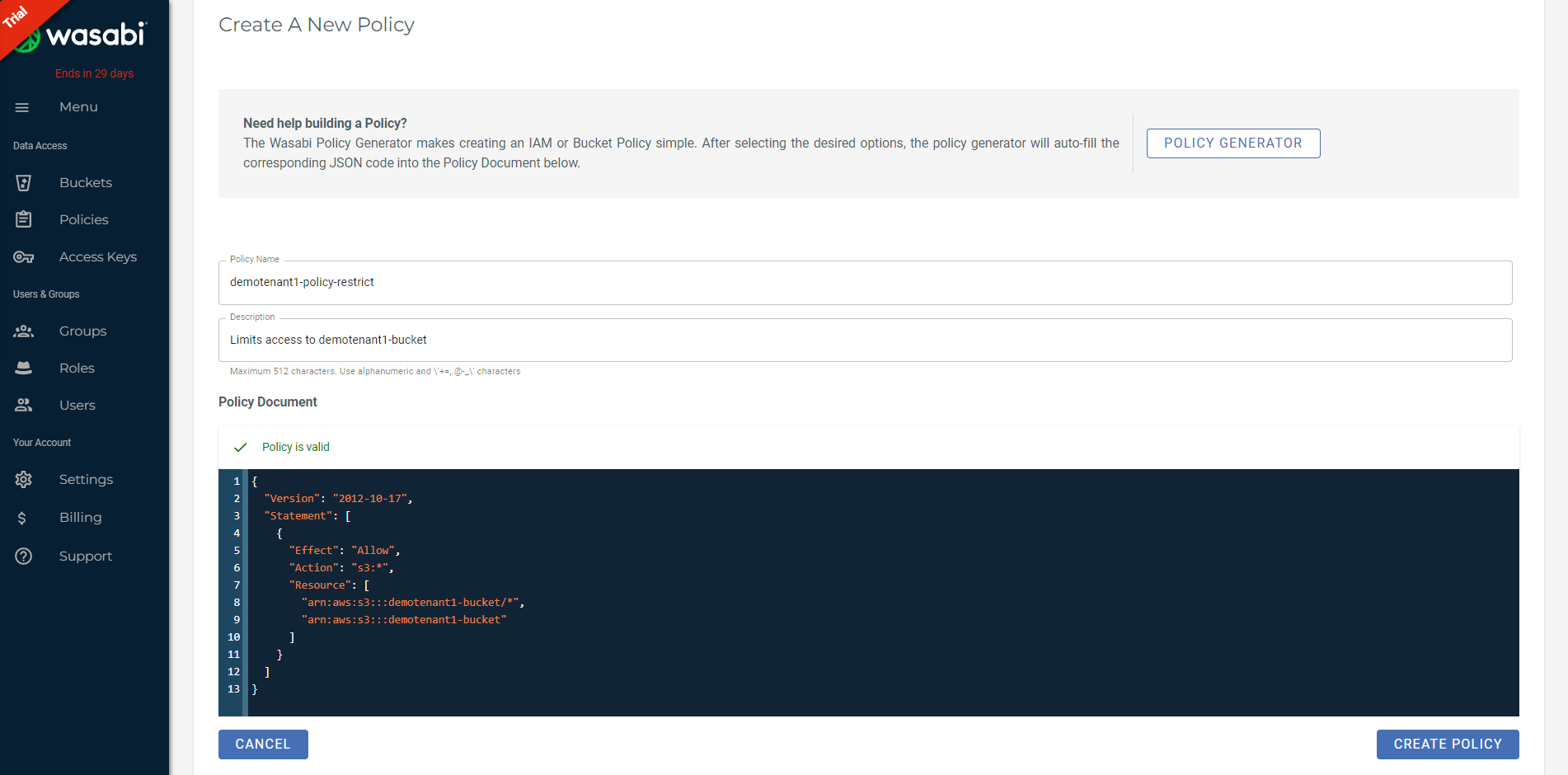

Select the Policies tab and click Create Policy. You’ll need to supply a Policy Name. In my case, I’m going to supply a similar name for clarity, and also note that this is to restrict bucket access, so I’m calling it demotenant1-policy-restrict. In the Policy Document field, I’m using the below code, but of course you’ll want to substitute in your own bucket names under the resource property. Note that this is listed twice, once as the bucket itself, and once for the objects inside of the bucket.

To understand what we’re doing: we’re allowing the full S3 API command set against one specific bucket resource for the user account that will have this policy assigned. Veeam’s and Wasabi’s instructions actually call out many specific S3 actions that can be executed, but I’ve run into issues with this actually working, as in the policy is valid and grants access, but it doesn’t restrict access to the client-specific bucket only. I’ve also tried explicitly allowing the S3ListOwnBuckets command so that Veeam would, in theory, be able to see only the buckets assigned to this policy, but this too failed for me.

Once you have your policy fields filled in, select Create Policy.

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Action": "s3:*",
          "Resource": [
            "arn:aws:s3:::demotenant1-bucket/*",
            "arn:aws:s3:::demotenant1-bucket"
          ]
        }
      ]
    }
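Since the only thing that changes between tenants is the bucket name, the policy document can be generated instead of hand-edited. The sketch below reproduces the same policy for any bucket. The function names and the "-policy-restrict" naming helper are just my own convention from this post, not anything Wasabi requires, and you would still paste the output into the Policy Document field yourself.

```python
import json


def tenant_policy(bucket: str) -> dict:
    """Return the per-tenant policy: full S3 access, scoped to one bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "s3:*",
                "Resource": [
                    f"arn:aws:s3:::{bucket}/*",  # the objects inside the bucket
                    f"arn:aws:s3:::{bucket}",    # the bucket itself
                ],
            }
        ],
    }


def policy_name(bucket: str) -> str:
    """Derive a policy name such as 'demotenant1-policy-restrict'."""
    suffix = "-bucket"
    tenant = bucket[: -len(suffix)] if bucket.endswith(suffix) else bucket
    return f"{tenant}-policy-restrict"


for bucket in ("demotenant1-bucket", "demotenant2-bucket"):
    print(policy_name(bucket))
    print(json.dumps(tenant_policy(bucket), indent=2))
```

Generating the documents this way mostly guards against the easy mistake of updating one Resource line and forgetting the other.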

Creating Your User Accounts

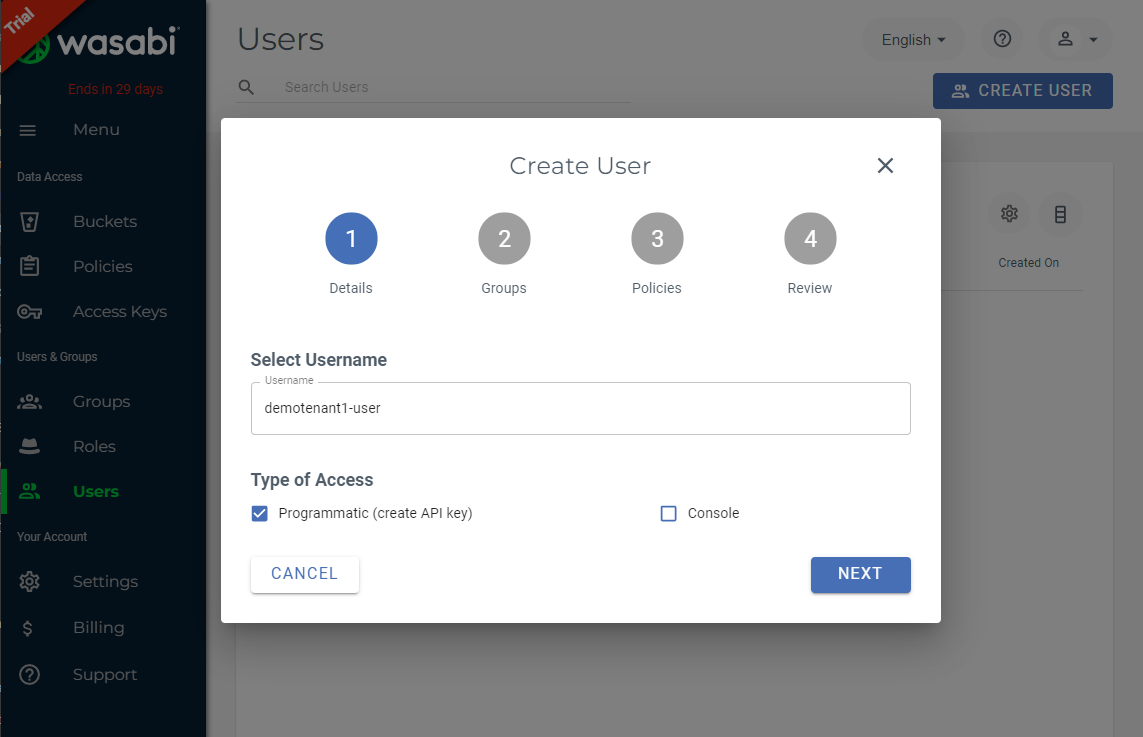

Next, you’ll want to create your users. The user account will create a login and API access key and secret that Veeam will use to access the Wasabi bucket. Navigate to the Users tab and select “Create User”. Supply an appropriate username and select “Programmatic (create API key)” for the Type of Access, and then click Next.

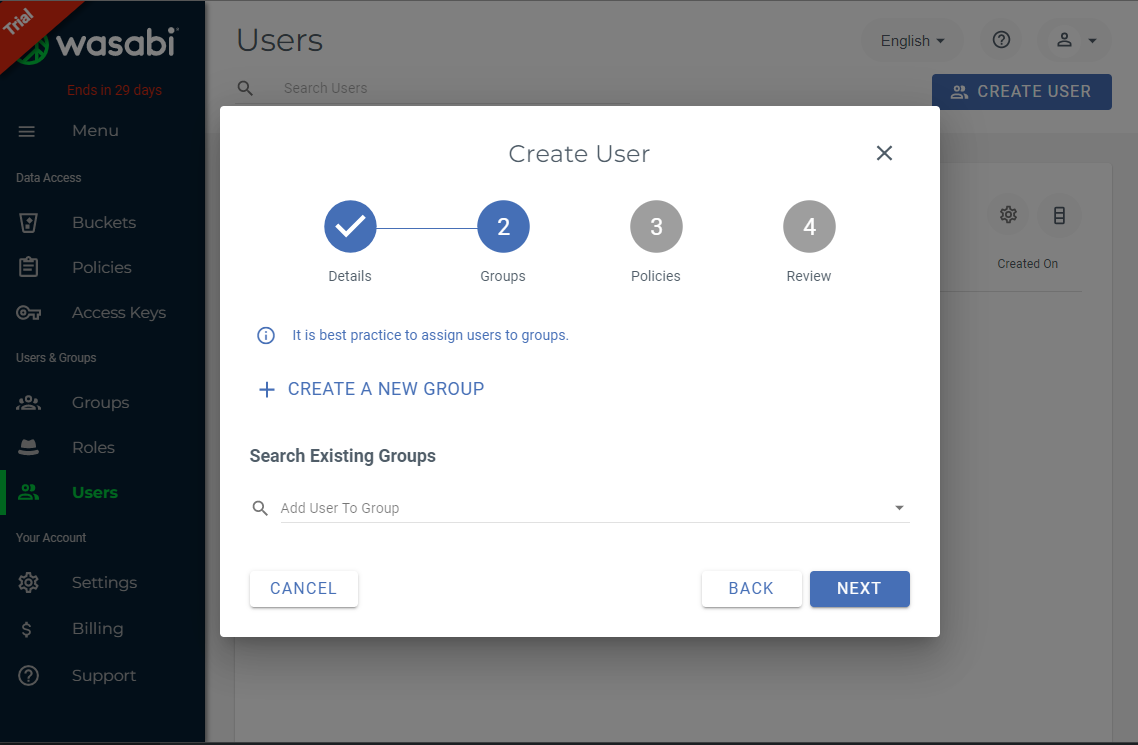

If you desire to have your users in groups, you can do so here, but that’s not necessary in my case so I’m going to select Next.

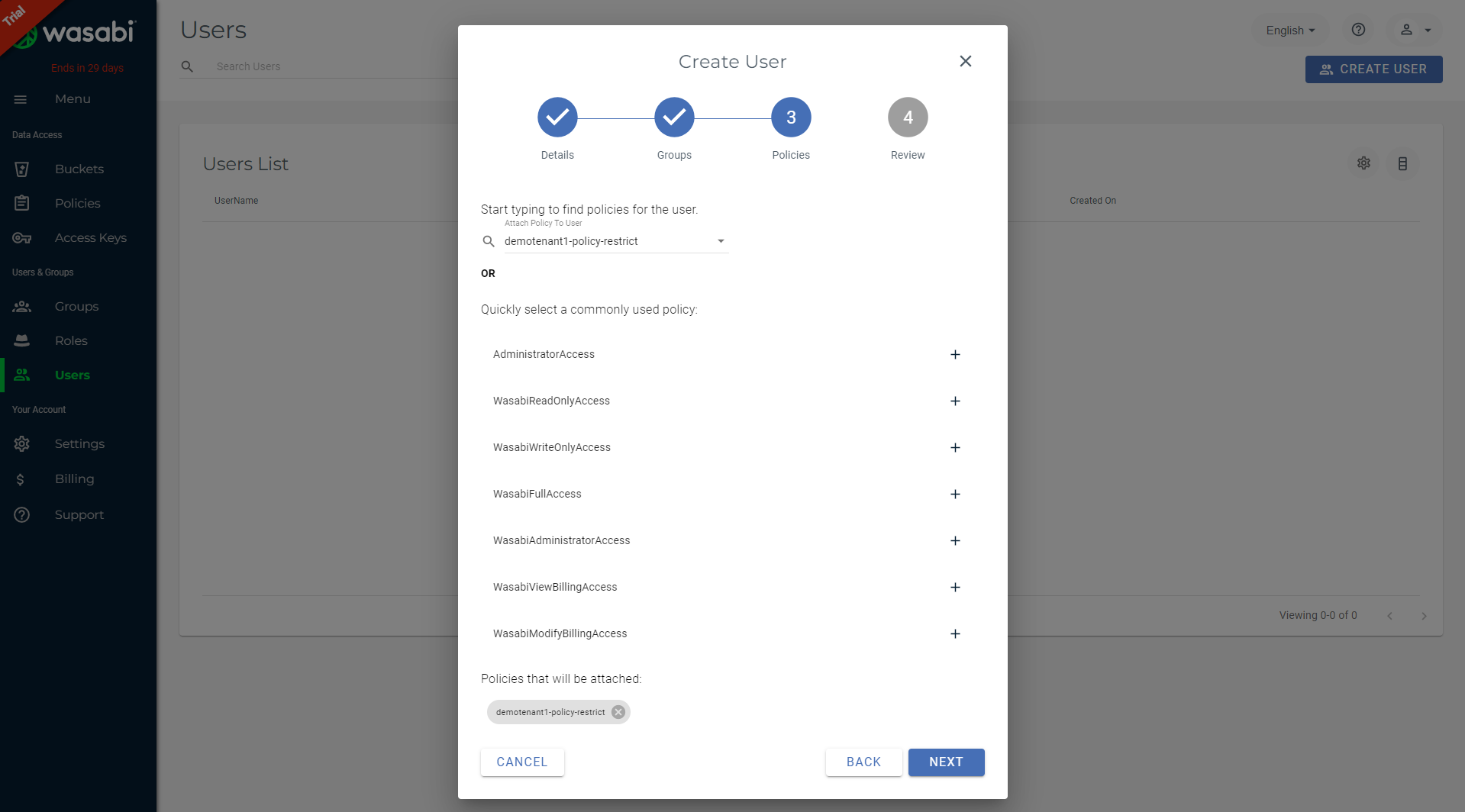

Now you’ll want to attach the user account to the policy you created for the associated tenant from the drop-down menu.

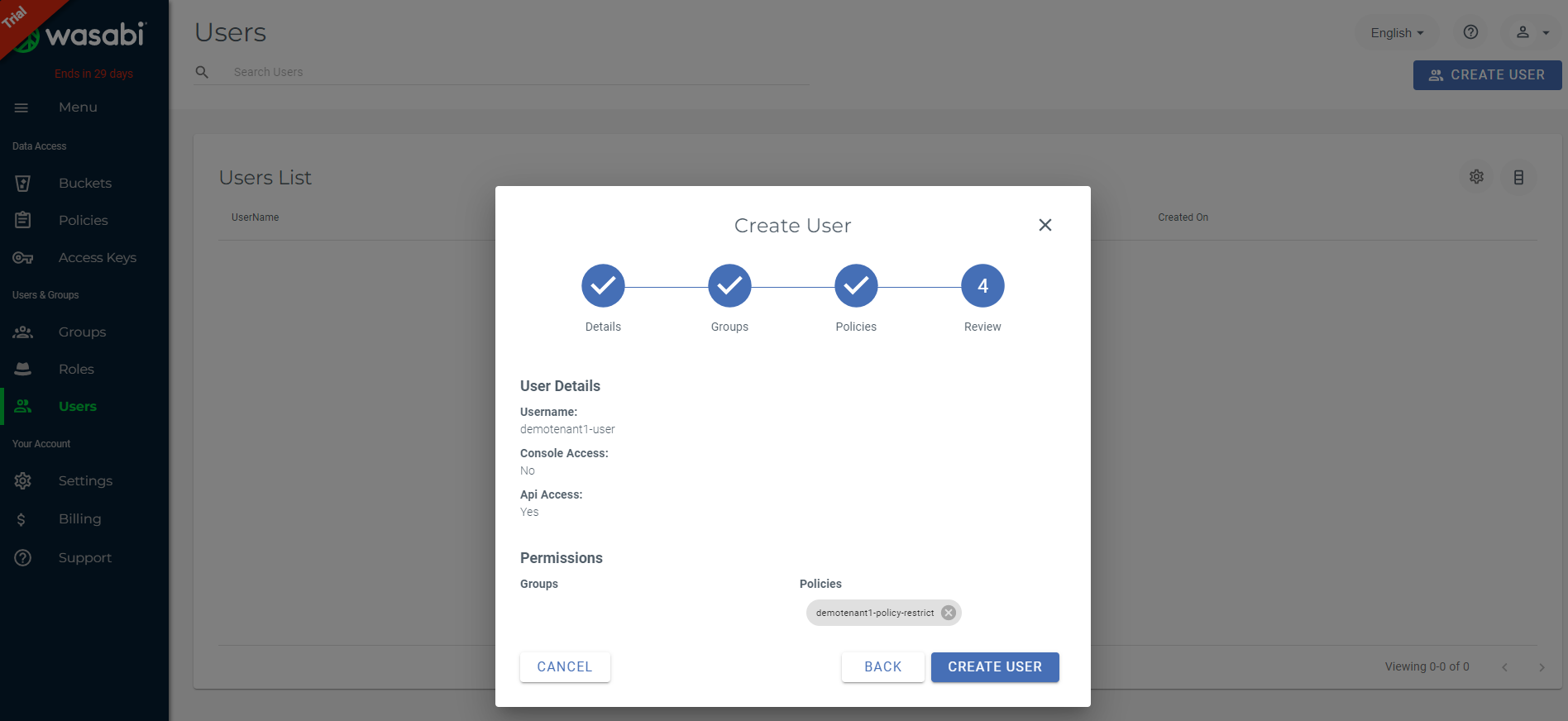

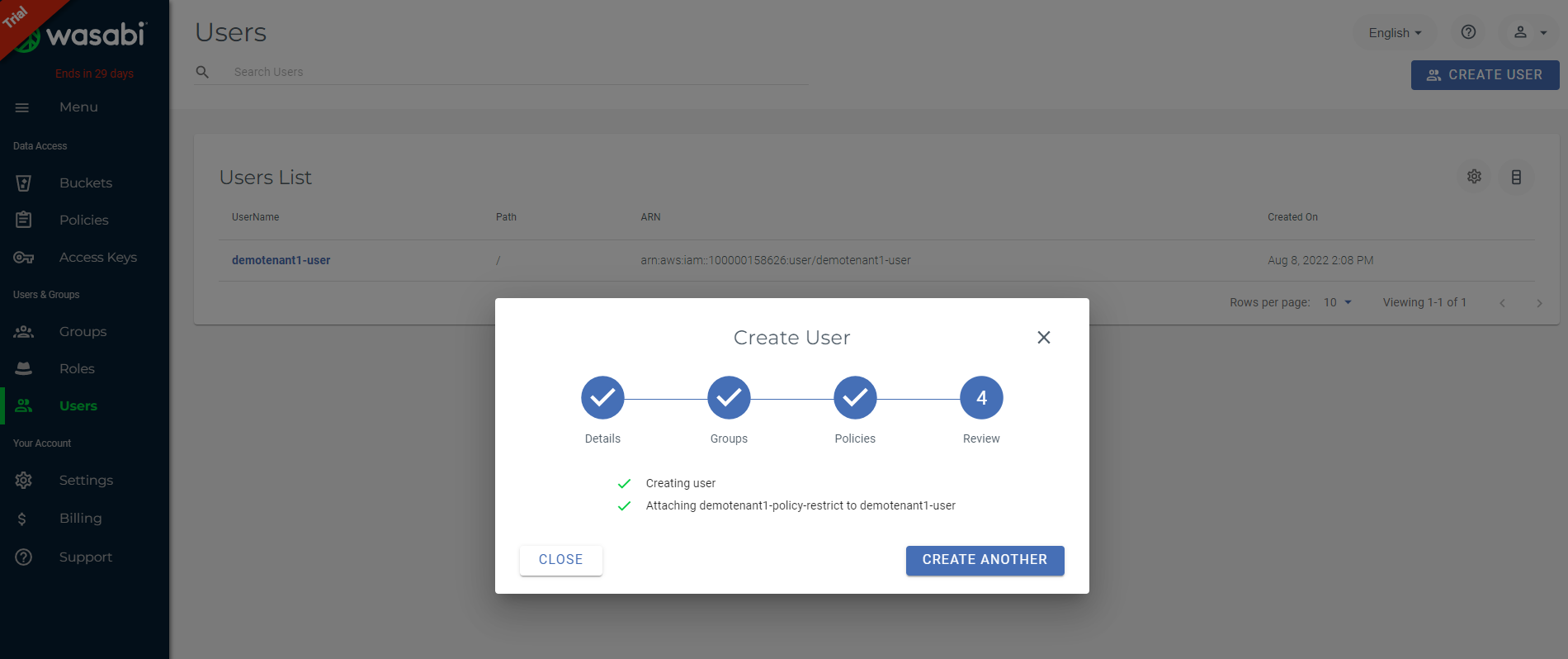

Confirm your user settings and click Create User.

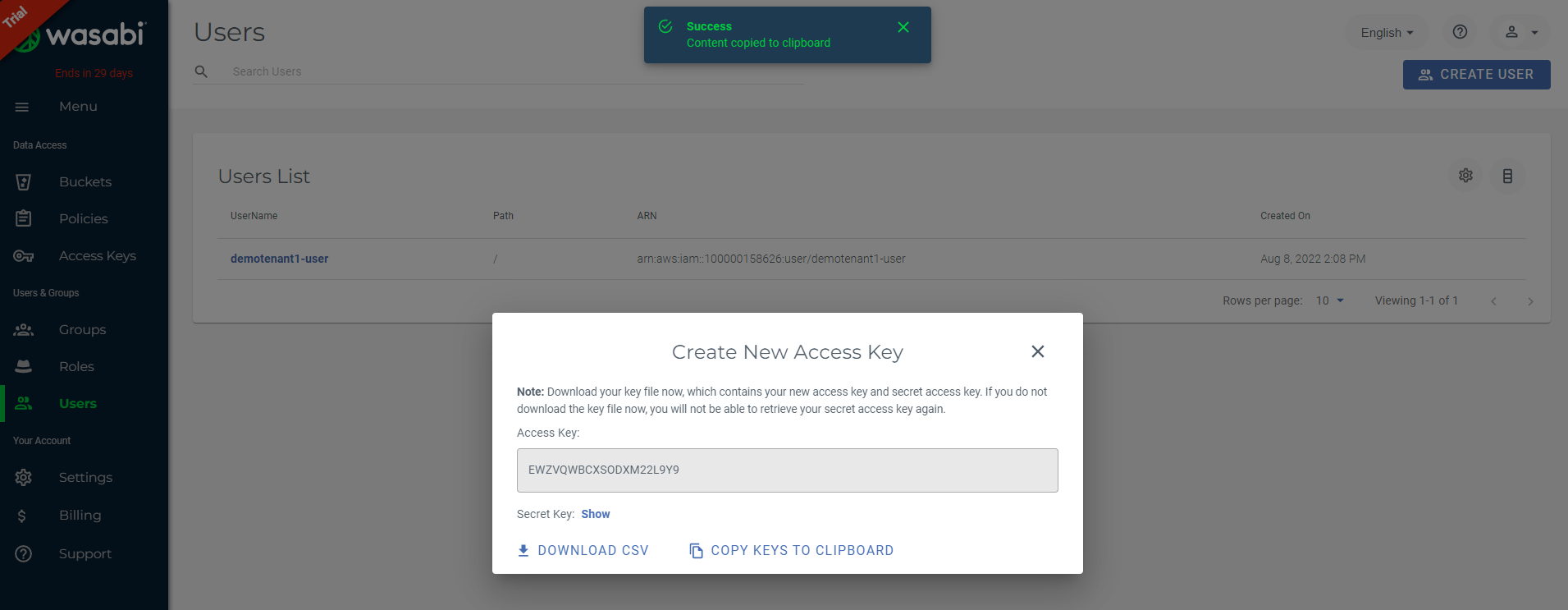

After you create your user, the console will briefly confirm that the user was created successfully before displaying the API Access and Secret Keys. Keep tabs on these, as you can’t display the secret key a second time, though if you do lose it, you can delete the key and create a new one under the Access Keys tab. You can download the keys as a CSV, copy them to your clipboard for safekeeping, or simply display them on screen. You’ll need these keys to add your new bucket to VBR.

Adding Your Bucket to VBR

Finally, all of the prep work is done. Time to add the bucket. Fingers crossed!

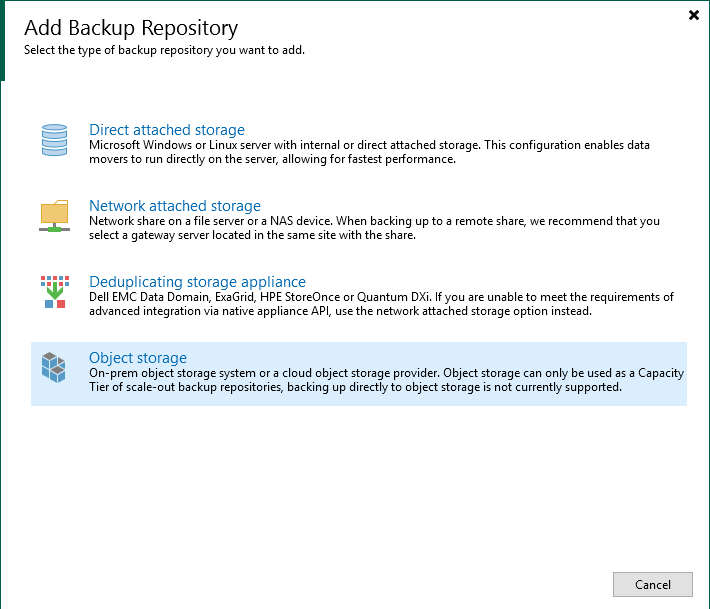

Open up VBR and browse out to Backup Infrastructure > Backup Repositories. Select Add Repository to start the new repository Wizard. Select Object storage as the repository type.

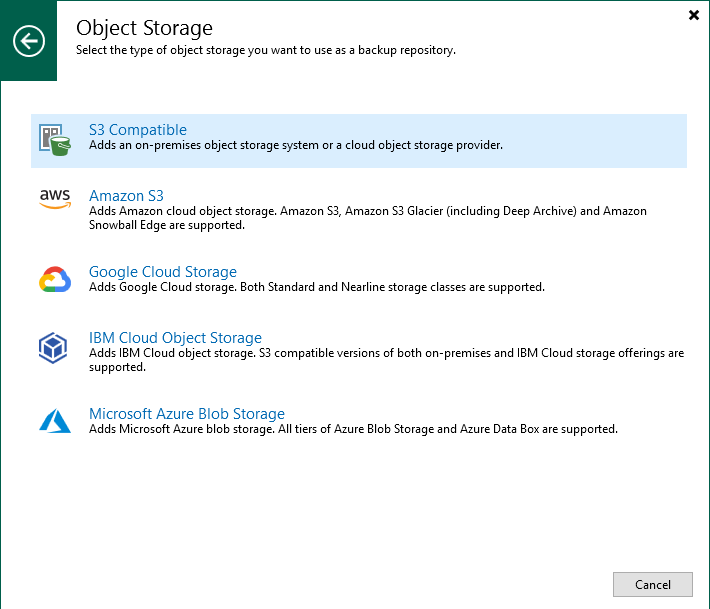

Since we’re using Wasabi, we’ll need to select the S3 Compatible object storage type.

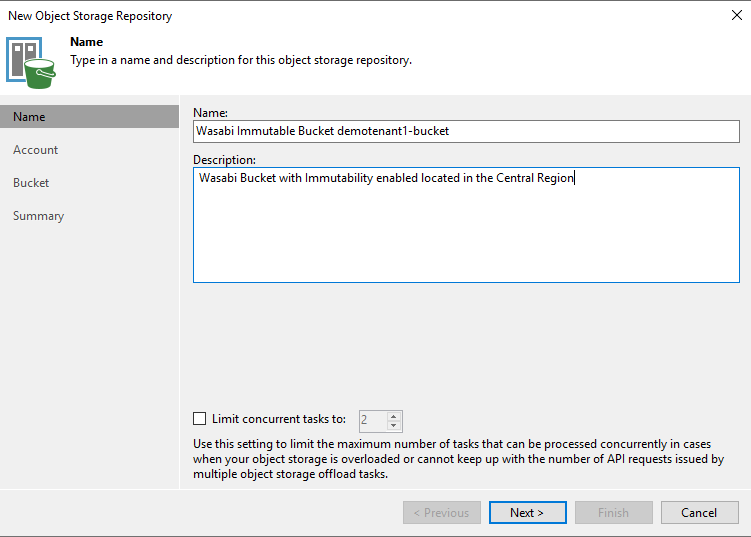

Supply an appropriate name and description for your repository. Select your concurrent task limit if desired and then click next.

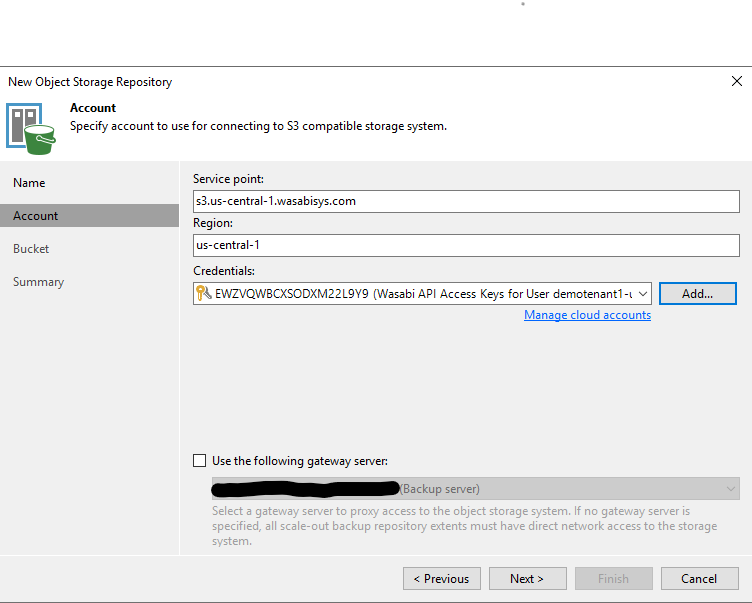

Next we will need to supply the service point access URL. Wasabi’s list of regions and URLs is available here. In our case, our bucket resides in the US Central region, so we’ll supply s3.us-central-1.wasabisys.com as the service URL and update the region name. Since we probably haven’t added our Wasabi credentials yet, we’ll need to click Add so that we can supply the API keys that were given when creating our user account.
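If you track your tenants in a script or spreadsheet, it can be handy to keep the values the wizard asks for in one place. Here is a minimal sketch of such a record. The class and field names are hypothetical, and the URL pattern is simply generalized from the us-central-1 example above, so check Wasabi's region list before relying on it for other regions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TenantRepository:
    """The values entered in the VBR wizard for one tenant (illustrative)."""

    tenant: str
    bucket: str
    region: str
    folder: str = "VBR"  # folder created inside the bucket later on

    @property
    def service_point(self) -> str:
        # Assumption: other regions follow the same pattern as the
        # s3.us-central-1.wasabisys.com example in this post.
        return f"s3.{self.region}.wasabisys.com"


repo = TenantRepository("demotenant1", "demotenant1-bucket", "us-central-1")
print(repo.service_point)
```

Note that the access and secret keys are intentionally not part of this record. Those belong in a password manager, not in a script.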

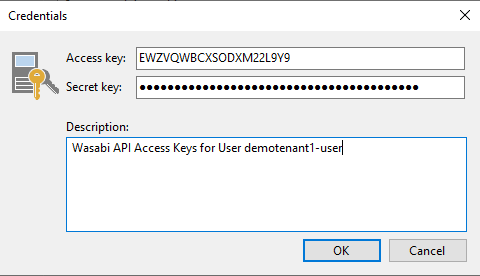

Supply the API Keys for the associated user along with a good description and click OK. If you have a specific gateway server you want to use, select it as well and click Next.

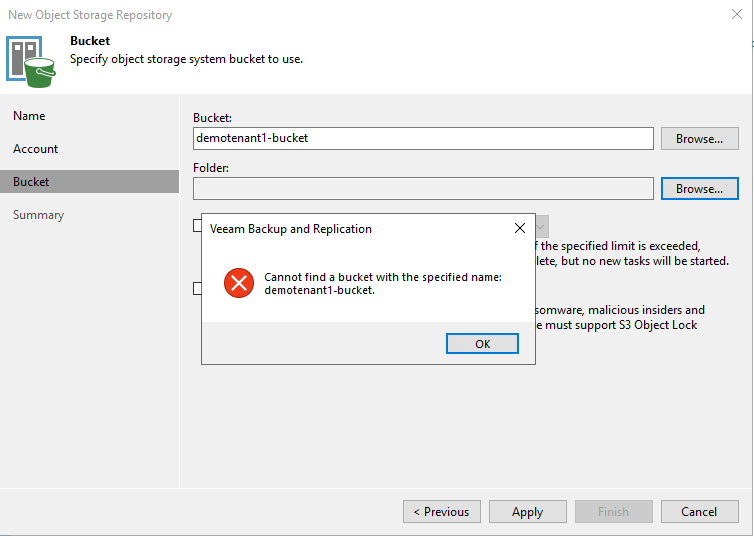

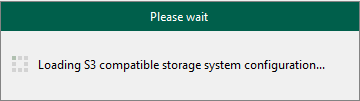

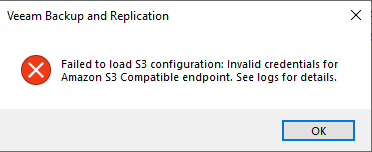

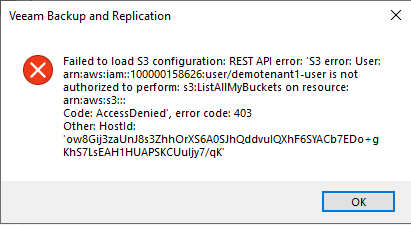

This is where VBR is going to connect to Wasabi and attempt to list the buckets it has access to. If you receive an error, you’ll want to confirm that your policy settings, access keys, service URL and region name are correct. (Yes, mine errored out when creating this document. Why? Remember that note I had about using an outdated version of VBR? Yeah, turns out my lab environment was outdated, but I thought I had updated it.)

Note that if you do have an issue with connecting, VBR does seem to cache some of this information, so if you found your error and resolved it, you may have to cancel out of the Object Repository creation wizard and start it over again so that it will utilize the updated information you’ve provided.

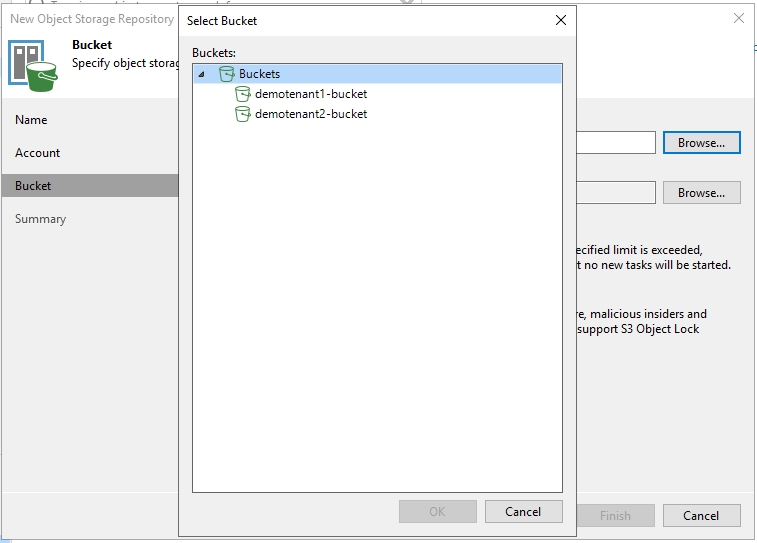

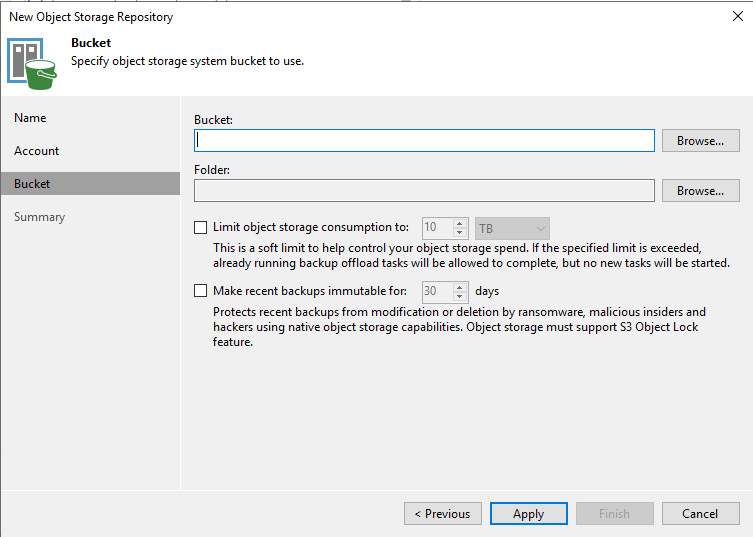

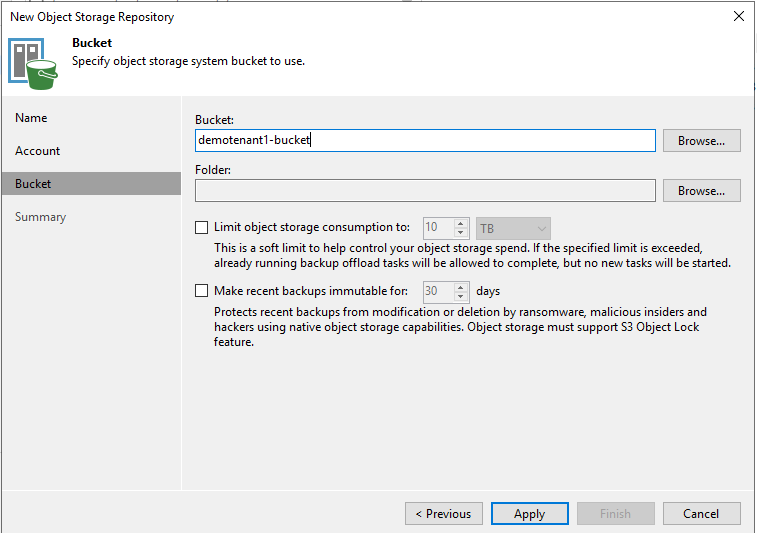

Now that you’ve successfully connected to your Wasabi account, you’ll want to select your bucket. Normally you could click browse and list all buckets, but since we’ve got some policy restrictions in place, you’ll receive an error because it can’t issue the s3:ListAllMyBuckets command. But that’s okay... we’ll just manually specify the bucket name.

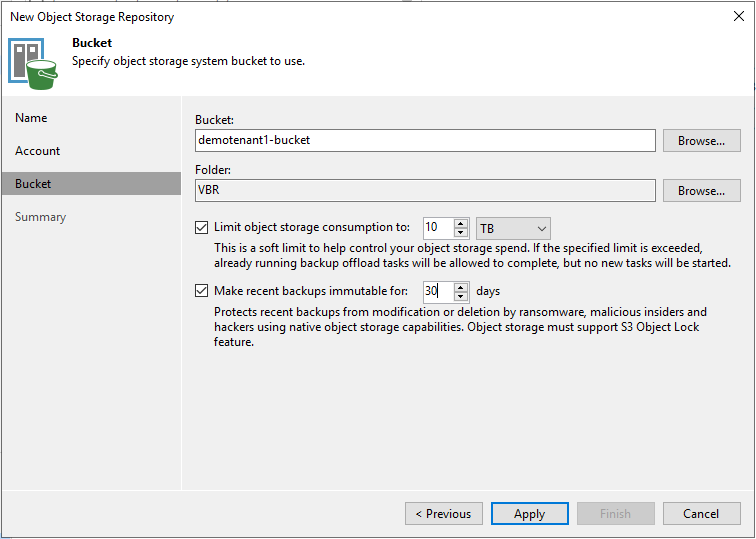

Because we can’t list all of our buckets, we just need to supply our bucket name manually in the Bucket field.

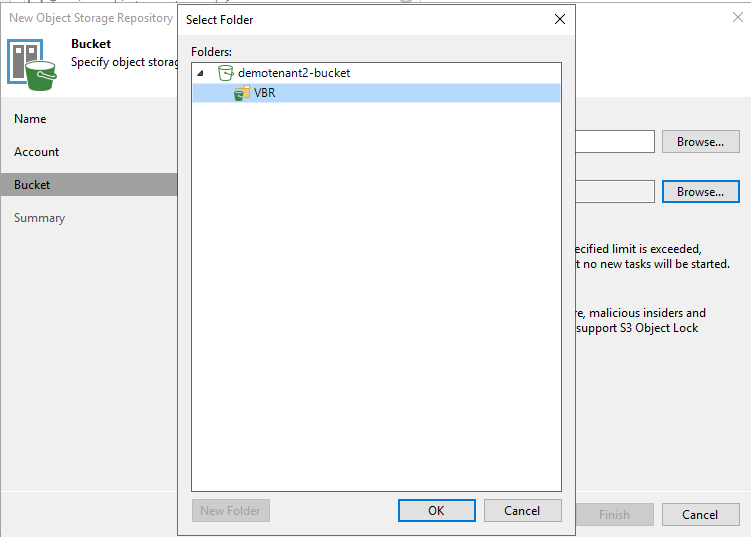

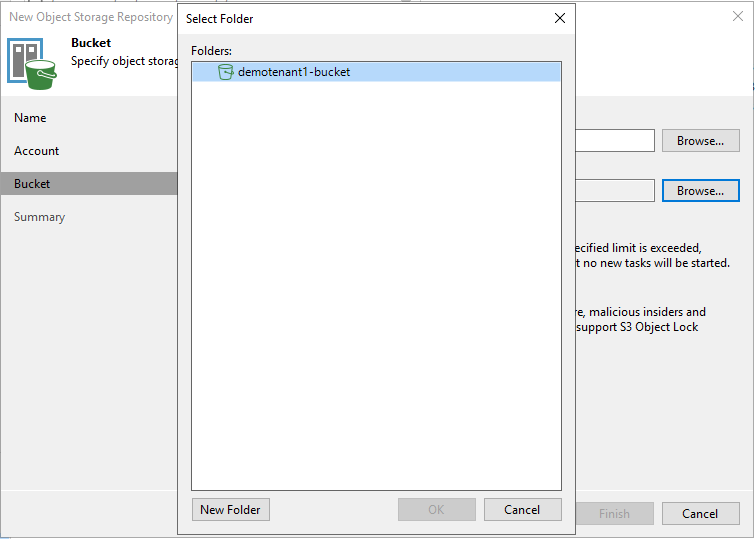

With the bucket name specified, click browse to select a folder. If VBR displays a dialog box with your bucket showing, then congrats, you’ve connected to your bucket successfully.

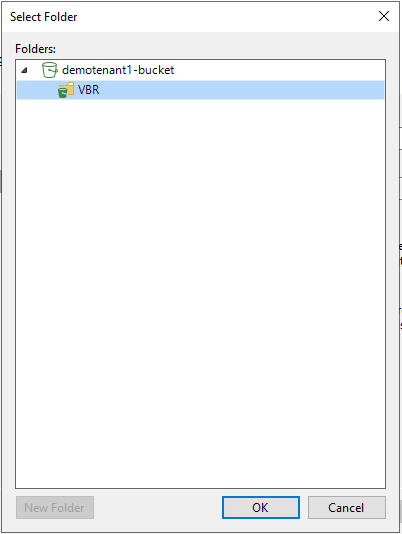

Of course, you should only see your bucket. Click New Folder and specify a folder name for the data to reside. In my case, I’m going to call it VBR. Then click OK.

Back on the Bucket configuration, you can specify a limit on how much data the bucket can use. Note that this is a “soft-limit” meaning that if a job is writing data to the bucket and the bucket reaches the soft-limit, it’ll complete the job successfully, but subsequent jobs will not be able to write data to the bucket unless data is removed to below the limit, or the limit is increased. Unfortunately, this is the only limitation you can set for buckets - nothing within Wasabi. So if your client is only paying for a certain allocation of space, they could in theory increase this limit themselves and get more space, so you’ll want to keep an eye on bucket utilization. If they are allowed to use as much as they like and you’re billing them every month based on space consumed, then this limitation isn’t really needed. In my case, my fictional tenant is only paying for 10TB, so I’m going to specify a 10TB soft-limit.
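Since the soft limit is the only guardrail, a simple check against the tenant's paid allocation is worth scripting. The sketch below only does the comparison. Where the used-bytes number comes from (the Wasabi console, a report, an API call) is up to you, and the function name, the 80% warning threshold and the binary TB unit are all assumptions of mine.

```python
TIB = 1024 ** 4  # bytes per TB, assuming binary units


def utilization_status(used_bytes: int, limit_bytes: int,
                       warn_ratio: float = 0.8) -> str:
    """Classify bucket usage against the tenant's allocation.

    Returns "ok", "warning" (at or above warn_ratio of the limit),
    or "over" (at or above the limit itself).
    """
    if limit_bytes <= 0:
        raise ValueError("limit_bytes must be positive")
    ratio = used_bytes / limit_bytes
    if ratio >= 1.0:
        return "over"
    if ratio >= warn_ratio:
        return "warning"
    return "ok"


# The fictional tenant pays for 10TB:
print(utilization_status(5 * TIB, 10 * TIB))
print(utilization_status(9 * TIB, 10 * TIB))
print(utilization_status(12 * TIB, 10 * TIB))
```

Because a running job is allowed to finish past the soft limit, an "over" result is possible even when the limit in VBR hasn't been touched.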

Also, since we enabled versioning and object lock on the bucket in Wasabi, you’ll be required to set the number of days your backups should remain immutable, up to 90 days. In my case, my fictional tenant wants their backup data to be immutable for 30 days. Then click Apply.
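When a tenant asks "when can this data actually be deleted?", the answer is simple date arithmetic. Here is a rough sketch of it. The function name is hypothetical, and it ignores any extra time the backup software may add on top of the configured period, so treat the result as the earliest possible date and not a guarantee.

```python
from datetime import date, timedelta


def immutable_until(written_on: date, immutability_days: int) -> date:
    """Earliest date on which data written on `written_on` is no longer
    protected, given the configured immutability period in days."""
    if immutability_days < 0:
        raise ValueError("immutability_days must be non-negative")
    return written_on + timedelta(days=immutability_days)


# 30 days of immutability for a backup written on 1 January 2023:
print(immutable_until(date(2023, 1, 1), 30))
```

This is also the date to keep in mind when migrating between buckets, since the old copy can't be removed before then.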

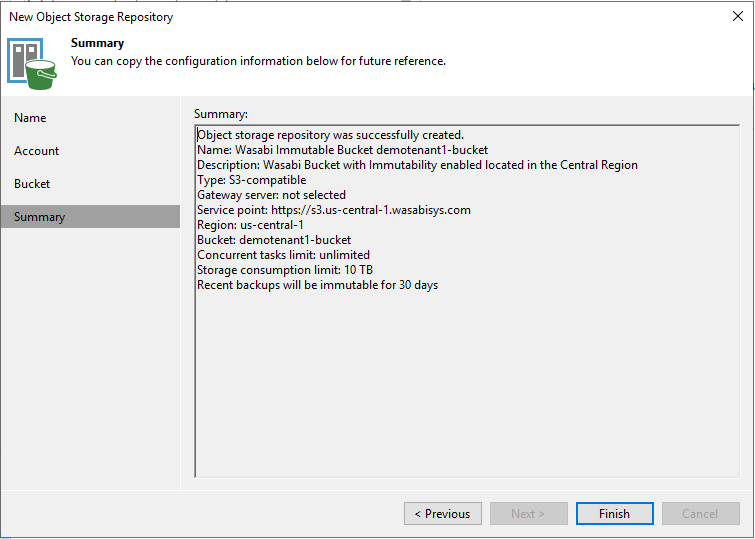

Review the configuration summary, and if you like what you see, click Finish to commit these settings and create your repository.

Success!

Congrats...you’ve successfully created a bucket in your Wasabi account that only your tenant can access, and it’s the only bucket that they can access, just as one would expect in a multi-tenant environment. You can now proceed with creating a Scale-Out Backup Repository, or if you’re rocking v12 (not yet released, but hopefully soon), you should be able to create a backup job to back up directly to your new repository using Direct to Object Storage!

If you have any better suggestions on how to do this, or any corrections to the information I posted, comment below! I’d love to hear your feedback!